The tech world is currently obsessed with "how smart AI can be." From large language models to multi-agent systems, the race is on to build more capable "brains." However, for the insurance industry and the broader commercial world, a far more critical question remains unanswered: Once an AI is smart enough to act, how do we manage the consequences of those actions?

We are entering the era of Agentic Commerce, where AI shifts from a "recommendation engine" to an autonomous "actor." But as we move from low-stakes tasks (like ordering coffee) to high-stakes institutional actions (like claims automation or asset allocation), we face a structural wall.

The reality is that a $10 trillion Agentic Economy cannot exist without the insurance industry. Why? Because authority can be delegated, but accountability cannot be outsourced. For AI to truly participate in commerce, it needs more than better code; it needs the "institutional road rights" that only the insurance and financial sectors can provide.

The Underwriting Prerequisite: Causal Delegation

Most AI experiments fail in real-world transactional environments because they rely on "correlation-based" models. These models are inherently unpredictable because they don't understand why they are acting; they only know what is likely to happen next based on patterns.

For an insurer, a "black box" agent is uninsurable. You cannot price the risk of an entity that doesn't recognize its own boundaries.

This is where the design layer becomes a prerequisite for underwriting. I argue that trust is an engineering outcome achieved through Causal Delegation. This involves three core requirements:

1. Causal Authorization: The agent's actions must stay within a human-defined "Causal Space."

2. Boundary Clarity: The agent must possess a "Self-Awareness of Authority": it must know exactly where its right to act ends.

3. Reversible Design: A "Circuit Breaker" mechanism that allows the agent to hand control back to a human when it encounters a "Causal Shift" (a situation its logic cannot resolve).

From an actuarial perspective, these are not just technical features; they are risk mitigation controls. Only when an AI's behavior is logically bounded can the "Residual Risk" become measurable and, therefore, priceable.
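To make the three requirements concrete, here is a minimal Python sketch. It assumes a Causal Space can be reduced to a set of permitted actions plus a monetary cap, and it treats a "Causal Shift" as a simple boolean flag. Real detection of a Causal Shift would be far harder. `CausalSpace`, `DelegatedAgent`, `act`, and `reset_by_human` are hypothetical names for illustration only, not an existing framework.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    EXECUTED = "executed"
    ESCALATED = "escalated_to_human"


@dataclass(frozen=True)
class CausalSpace:
    """Hypothetical human-defined boundary: permitted actions and a monetary cap."""
    allowed_actions: frozenset[str]
    max_amount: float


@dataclass
class DelegatedAgent:
    space: CausalSpace
    breaker_tripped: bool = False
    audit_log: list[str] = field(default_factory=list)

    def within_authority(self, action: str, amount: float) -> bool:
        # Boundary Clarity: the agent checks its own right to act before acting.
        return (action in self.space.allowed_actions
                and 0 <= amount <= self.space.max_amount)

    def act(self, action: str, amount: float, situation_known: bool = True) -> Outcome:
        # Reversible Design: while the breaker is open, every request goes to a human.
        if self.breaker_tripped:
            self.audit_log.append(f"escalated (breaker open): {action} {amount}")
            return Outcome.ESCALATED
        # Causal Shift (hypothetical flag): a situation the agent's logic cannot
        # resolve trips the breaker, so later requests also wait for a human.
        if not situation_known:
            self.breaker_tripped = True
            self.audit_log.append(f"breaker tripped: {action} {amount}")
            return Outcome.ESCALATED
        # Causal Authorization: requests outside the Causal Space are refused
        # and handed to a human, but do not trip the breaker.
        if not self.within_authority(action, amount):
            self.audit_log.append(f"out of authority: {action} {amount}")
            return Outcome.ESCALATED
        self.audit_log.append(f"executed: {action} {amount}")
        return Outcome.EXECUTED

    def reset_by_human(self) -> None:
        # Only a human closes the breaker again.
        self.breaker_tripped = False
```

For example, an agent limited to `approve_claim` up to 5,000 executes a 1,200 approval and escalates a 9,000 one. After a Causal Shift, it escalates everything until `reset_by_human()` is called. The `audit_log` is the part an underwriter would care about. It turns bounded behavior into a record that can be measured.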

Finance: The Gateway of Action

Even with a perfect design, AI actions remain in a sandbox until they interact with the financial system. In the Agentic era, finance serves as the "Action Gateway."

Current verification methods, such as one-time passcodes (OTPs) sent by SMS, assume a human is present to respond, so they fail in an automated economy. We are seeing a transition toward tokenized authorization, a concept mirrored in the recent collaborations between Google and Mastercard to standardize how AI agents interact with payment gateways. By issuing a restricted digital token, the financial institution constrains the agent's spending power within specific causal boundaries.
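The idea of a restricted token can be sketched in a few lines of Python. The fields below (category allowlist, per-transaction limit, total budget, expiry, and a revocation flag standing in for a "stop" command) are assumptions chosen for illustration. `AgentPaymentToken` and `authorize` are hypothetical. They are not the schema or API of Google, Mastercard, or any real payment network.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class AgentPaymentToken:
    """Hypothetical restricted token; not the schema of any real network or protocol."""
    agent_id: str
    allowed_categories: frozenset[str]
    per_txn_limit: float
    remaining_budget: float
    expires_at: datetime
    revoked: bool = False  # set by the principal or issuer as a "stop" command


def authorize(token: AgentPaymentToken, category: str, amount: float,
              now: datetime) -> tuple[bool, str]:
    # The issuer, not the agent, runs these checks at the payment gateway.
    if token.revoked:
        return False, "revoked"
    if now >= token.expires_at:
        return False, "expired"
    if category not in token.allowed_categories:
        return False, "category_not_allowed"
    if amount <= 0:
        return False, "invalid_amount"
    if amount > token.per_txn_limit:
        return False, "over_per_txn_limit"
    if amount > token.remaining_budget:
        return False, "budget_exhausted"
    # Approved spend is deducted, so the token's authority shrinks with use.
    token.remaining_budget -= amount
    return True, "approved"


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
token = AgentPaymentToken("agent-001", frozenset({"travel"}), per_txn_limit=500.0,
                          remaining_budget=800.0, expires_at=now + timedelta(days=1))
print(authorize(token, "travel", 450.0, now))       # (True, 'approved')
print(authorize(token, "electronics", 100.0, now))  # (False, 'category_not_allowed')
print(authorize(token, "travel", 400.0, now))       # (False, 'budget_exhausted')
```

The design choice that matters here is where enforcement lives. The agent can request anything, but the gateway holds the limits. Setting `revoked` is honored on the very next request, whatever the agent's own logic concludes.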

When the financial system recognizes an AI agent's "status" and its "stop" commands as valid legal instructions, the agent transitions from a demo to a commercial reality. But even then, who pays when the system fails?

The Insurance Endgame: Pricing the Residual Risk

This is where the Agentic Insurer finds its greatest opportunity.

Even the most robust design cannot eliminate 100% of uncertainty. In risk management, this is known as "Residual Risk." The true function of insurance is to transform these unbearable potential losses into a predictable, manageable cost: the premium.
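The link between bounded design and a priceable premium can be illustrated with the expected-value premium principle. This is a standard textbook simplification: expected loss plus a proportional loading. Real pricing is far richer. Every input below is a made-up number, and `residual_risk_premium` and `control_catch_rate` are hypothetical names.

```python
def residual_risk_premium(actions_per_year: int, failure_rate: float,
                          control_catch_rate: float, avg_loss: float,
                          loading: float) -> float:
    """Expected-value premium on the failures that survive the agent's controls.

    All inputs are hypothetical. control_catch_rate is the assumed share of
    failures stopped by boundary checks, circuit breakers, and token limits.
    """
    residual_failures = actions_per_year * failure_rate * (1 - control_catch_rate)
    expected_loss = residual_failures * avg_loss
    # The loading covers uncertainty, expenses, and capital cost on top of expected loss.
    return expected_loss * (1 + loading)


unbounded = residual_risk_premium(100_000, 0.001, 0.0, 2_000.0, 0.3)
bounded = residual_risk_premium(100_000, 0.001, 0.9, 2_000.0, 0.3)
print(f"{unbounded:,.0f} vs {bounded:,.0f}")  # 260,000 vs 26,000
```

Under these assumed numbers, controls that catch 90% of failures cut the priced residual risk tenfold. For a black-box agent, `control_catch_rate` is simply unknown, so there is no input to price.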

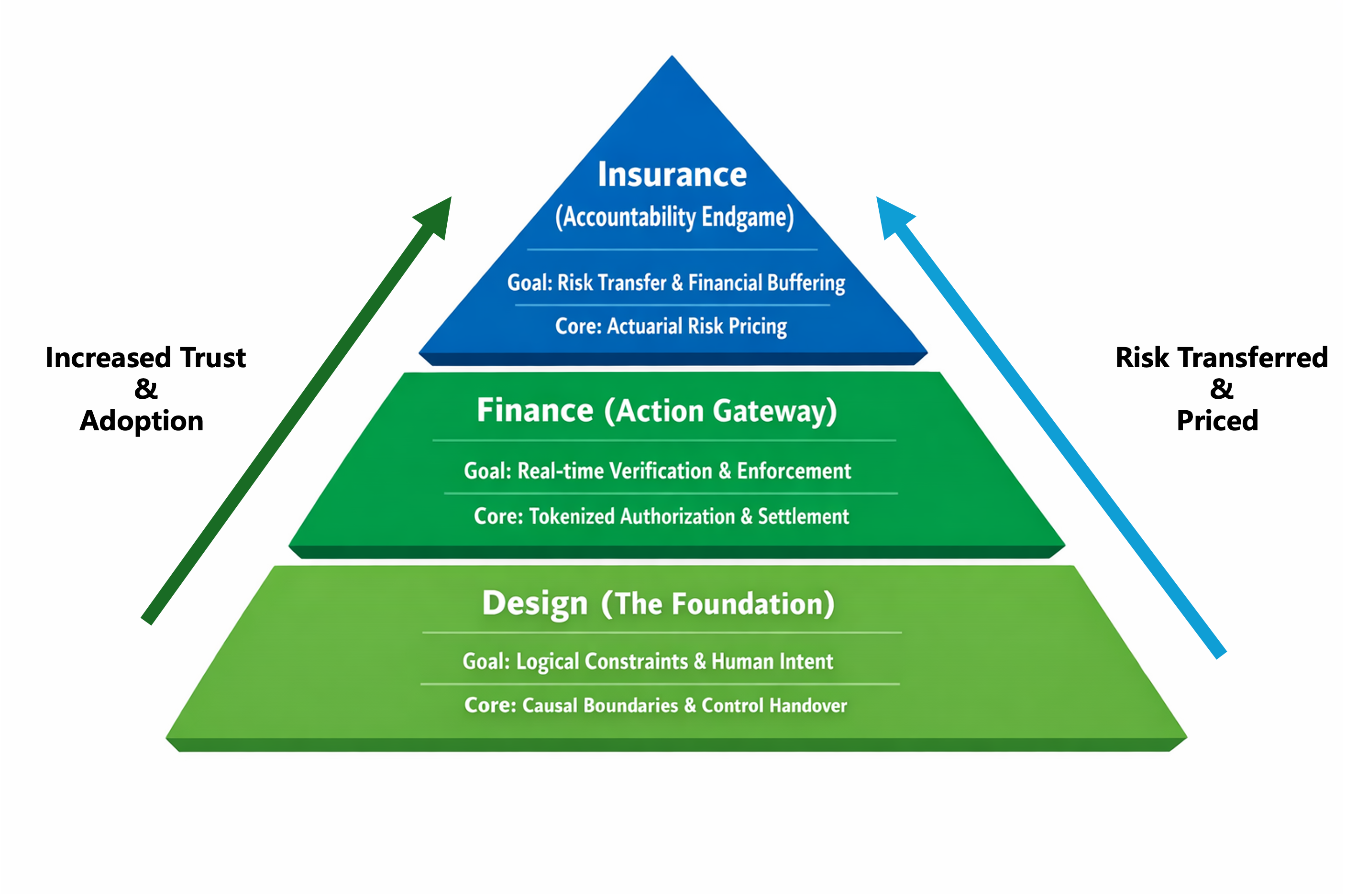

As shown in the Accountability Pyramid, trust is built through three layers of structural filtering:

- The Design Layer resolves the logic of AI actions.

- The Financial Layer provides the real-time road rights and security gates.

- The Insurance Layer provides the final financial backstop.

Figure 1 - The Accountability Pyramid of Agentic Commerce: The three-layer structural filtering for building systemic trust in AI agents.

When the insurance industry can accurately price the behavior of an AI agent, it essentially provides that agent with a "Credit Certificate." It tells the world: "This agent is designed so rigorously that we are willing to back its failures with our balance sheet."

Conclusion: From Risk Managers to Credit Providers

The competition in Agentic Commerce will eventually shift from model capability to credit systems. The most powerful agents will not be those with the most parameters, but those that can clearly present their boundaries and complete the responsibility loop within an institutional framework.

Finance is the prerequisite for all transactions, and insurance is the backstop for unknown risks. When we close the loop in these two most conservative and rigorous fields, we will have traveled the full path: from redefining who holds the right to act, to realizing trusted, systemic action.

We have moved beyond the stage of merely designing how AI should act. We are now in the business of backing those actions with the institutional credit that only insurance can provide.