TLDR: Deploying agentic AI without governance infrastructure accumulates regulatory and operational exposure faster than most carriers recognize. The carriers scaling with confidence are those that made governance foundational rather than remedial.

The Governance Infrastructure Insurers Need

Agentic AI is taking on consequential decisions across underwriting and claims at unprecedented speed and scale. The governance infrastructure at most insurers has not kept pace.

A simple prompt change - a few lines of text updated in a configuration file - can alter how an AI agent reasons about risk across every submission it processes. In a traditional predictive model, the equivalent change requires a full retraining cycle - weeks of documented work, validation runs, and a formal change record. In an agentic system without governance infrastructure, the same functional impact happens with no audit trail. Regulatory and operational exposure accumulates as a result.

Organizations scaling agentic AI with confidence recognized this gap early and built the infrastructure to close it, because the ability to answer basic questions about their AI systems (what is running, who owns it, and what changed) is a precondition for operating them responsibly at scale.

Why Agentic AI Strains Traditional Model Governance

Insurance organizations have spent years building model risk management capability for predictive AI - pricing models, fraud scores, and reserve estimates. These systems are well understood in governance terms. They take defined inputs, apply learned parameters, and produce a single output that a human then acts on.

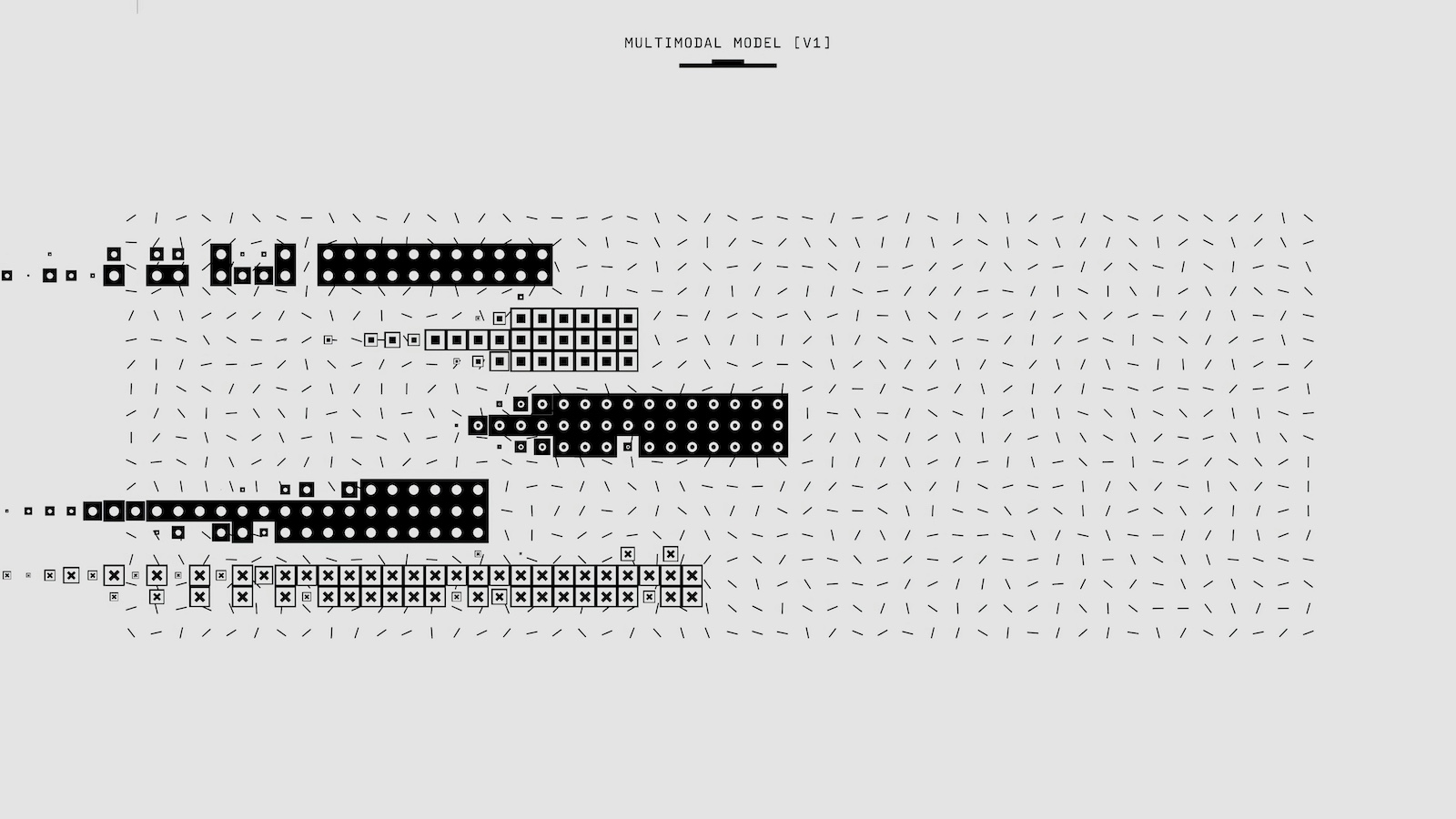

Agentic systems change this structure in fundamental ways. Reasoning chains span multiple steps - querying data, evaluating evidence, calling external tools, forming intermediate conclusions - before producing a final result. A simple input-output log is no longer adequate.

Prompts are the governing parameters for AI agents. Changing a system prompt is functionally equivalent to changing a model's weights. Tool calls are data transactions that can send confidential information to third-party systems. Human oversight placed only at the end of the workflow misses the dozens of consequential micro-decisions made along the way.
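To make the contrast with a simple input-output log concrete, the following is a minimal sketch of a step-level trace. It assumes a hypothetical record shape: the `Step` and `Trace` classes, the `kind` labels, the `sends_external` flag, and the identifiers such as `uw-triage@3` are all illustrative and not drawn from any particular framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Step:
    kind: str                     # hypothetical labels, e.g. "tool_call" or "conclusion"
    detail: str                   # what the agent did at this step
    sends_external: bool = False  # True if data left the carrier's own systems
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


@dataclass
class Trace:
    submission_id: str
    prompt_version: str           # the prompt version governing this run
    steps: list = field(default_factory=list)

    def record(self, kind, detail, sends_external=False):
        # Append one intermediate step instead of logging only the final output.
        self.steps.append(Step(kind, detail, sends_external))

    def external_calls(self):
        # Steps that moved data to a third party, for review as data transactions.
        return [s for s in self.steps if s.sends_external]


trace = Trace(submission_id="SUB-001", prompt_version="uw-triage@3")
trace.record("tool_call", "loss_history_lookup")
trace.record("tool_call", "third_party_property_data", sends_external=True)
trace.record("conclusion", "refer to underwriter")

for step in trace.external_calls():
    print(step.kind, step.detail)
```

A trace of this kind gives a reviewer the intermediate steps, including which tool calls crossed the carrier's boundary, rather than only the final recommendation.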

What Regulators Are Expecting

SR 11-7, the Federal Reserve's model risk management guidance, is the de facto governance baseline. State insurance regulators are applying its principles - model inventory, independent validation, change management documentation, ongoing monitoring - in examination practice. Any carrier deploying AI that influences underwriting or claims decisions should treat SR 11-7 as the minimum standard.

The NAIC AI Model Bulletin's eight principles are now shaping market conduct exams. Explainability carries the sharpest operational bite: if an AI system influenced a coverage decline, the insurer must be able to explain why in specific, contemporaneous terms. Colorado SB21-169 adds testing for proxy discrimination, documentation of external data sources, and annual certification. Similar requirements are advancing in California, New York, and elsewhere.

One pressure that did not exist two years ago is now concrete: carriers without documented AI governance frameworks are facing coverage exclusions and premium increases on their own AI liability policies. The industry that applies governance scrutiny to its insureds is now applying it to itself.

Governance Capabilities

A governance framework for agentic AI requires six distinct capabilities. Each addresses a specific gap in how these systems are built, operated, and governed.

- Asset registry - Answers the first question regulators ask: what AI is in production, who owns it, and what version is live. Every agent, task, prompt, and tool is stored as a versioned database record - not hidden in code. SR 11-7's model inventory requirement and the NAIC transparency principle both resolve to this capability.

- Lifecycle framework - Enforces the change management discipline that agentic systems otherwise lack. Every asset version moves through a defined sequence of states - from draft through shadow deployment to champion - with human approval gates at the points that matter. A prompt change cannot reach production without the same controls applied to a code change.

- Testing & validation - Replaces ad-hoc demonstration with structured evidence. Before any agent version reaches production, it is tested against ground-truth datasets labeled by subject-matter experts, producing precision, recall, and fairness metrics stored against the specific version. Colorado SB21-169's bias testing requirement and SR 11-7's independent validation requirement both have a direct answer here.

- Execution control - Ensures that what runs in production is exactly what governance approved. At runtime the framework pulls the current champion configuration from the governed registry. Agents can access only authorized tools and approved parameters. Governance is enforced at execution, not assumed after the fact.

- Decision logging - Produces the contemporaneous record that the NAIC explainability requirement and Colorado's adverse-action provisions demand. Every AI invocation is logged with the exact prompt version, inference parameters, tool calls, inputs, and output. When a market conduct examiner asks how a specific decision was made, the answer is a query, not a reconstruction.

- Compliance & reporting - Makes the governance data useful to the people who need it. Model inventory reports, model cards, override-rate analysis, and regulatory evidence packages are generated on demand from the records the other five components accumulate, not assembled manually when the examination notice arrives.
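The registry, lifecycle, and execution-control capabilities above can be sketched together in a few lines. This is an illustration under stated assumptions, not a reference implementation: the `AssetVersion` and `Registry` classes, the `retired` state, the rule that only promotion to champion needs a named approver, and the tool names are all hypothetical. A real registry would sit on a database rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Optional

# Allowed lifecycle moves. "retired" is an assumed extra state beyond
# the draft / shadow / champion sequence described above.
TRANSITIONS = {"draft": {"shadow"}, "shadow": {"champion"}, "champion": {"retired"}}
APPROVAL_REQUIRED = {"champion"}  # assumption: this gate needs a named approver


@dataclass
class AssetVersion:
    asset_id: str
    version: int
    prompt: str
    allowed_tools: frozenset
    owner: str
    state: str = "draft"
    approved_by: Optional[str] = None


class Registry:
    def __init__(self):
        self._versions = []  # stand-in for versioned database records

    def register(self, asset):
        self._versions.append(asset)
        return asset

    def promote(self, asset, to_state, approver=None):
        if to_state not in TRANSITIONS.get(asset.state, set()):
            raise ValueError(f"{asset.state} -> {to_state} is not an allowed transition")
        if to_state in APPROVAL_REQUIRED and not approver:
            raise PermissionError("promotion to champion requires a named approver")
        if to_state == "champion":
            # Only one champion per asset: retire the previous one.
            for other in self._versions:
                if other.asset_id == asset.asset_id and other.state == "champion":
                    other.state = "retired"
        asset.state = to_state
        if approver:
            asset.approved_by = approver

    def champion(self, asset_id):
        # Runtime lookup: production runs only the governed champion version.
        for asset in self._versions:
            if asset.asset_id == asset_id and asset.state == "champion":
                return asset
        raise LookupError(f"no champion version for {asset_id}")


def call_tool(config, tool_name):
    # Execution control: refuse any tool the approved version does not list.
    if tool_name not in config.allowed_tools:
        raise PermissionError(f"{tool_name} is not authorized for {config.asset_id}")
    return f"{tool_name} called under {config.asset_id}@{config.version}"


registry = Registry()
v1 = registry.register(AssetVersion(
    "uw-triage", 1, "Assess the submission against appetite guidelines.",
    frozenset({"loss_history_lookup"}), owner="underwriting-ops"))
registry.promote(v1, "shadow")
registry.promote(v1, "champion", approver="model-risk-officer")

live = registry.champion("uw-triage")
print(call_tool(live, "loss_history_lookup"))
```

In this sketch a prompt edit means registering a new `AssetVersion`, which starts in draft and cannot skip shadow deployment or the approval gate. The testing and reporting capabilities would attach metrics and reports to the same version records.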

The Infrastructure Argument

Policy documents alone cannot operationalize these capabilities. A policy requiring documented approval for all agent changes creates the obligation but not the mechanism. Under operational pressure, informal processes prevail.

The insurance industry has already solved an analogous problem in actuarial pricing systems. Algorithms are versioned, changes require documented approval, prior versions are retained for audit, and the system generates its own compliance record. No one would consider deploying a new rating algorithm by editing a configuration file with no version control. That standard of infrastructure is exactly what is needed for AI agents.

When agent behavior is embedded in code, compliance teams cannot access it without engineering support. Storing agent definitions as versioned configuration records changes this - any authorized reviewer can see exactly what instructions any agent version was operating under at any moment in time. Built on that foundation, the framework can enforce the lifecycle mechanically, log every decision with the version that produced it, surface override patterns automatically, and generate regulatory evidence on demand.
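As a rough illustration of logging each decision with the version that produced it and surfacing override patterns, consider the sketch below. The field names, the `human_override` flag, and the in-memory list are assumptions made for the example; a production log would be an append-only store with its own retention and access controls.

```python
from collections import defaultdict

decision_log = []  # stand-in for an append-only decision store


def log_decision(submission_id, prompt_version, params, tool_calls,
                 inputs, output, human_override=False):
    # One record per AI invocation, tied to the exact prompt version.
    decision_log.append({
        "submission_id": submission_id,
        "prompt_version": prompt_version,
        "params": params,
        "tool_calls": tool_calls,
        "inputs": inputs,
        "output": output,
        "human_override": human_override,  # True if a person reversed the output
    })


def decisions_for(submission_id):
    # An examiner's question becomes a lookup, not a reconstruction.
    return [r for r in decision_log if r["submission_id"] == submission_id]


def override_rate_by_version(log):
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for rec in log:
        totals[rec["prompt_version"]] += 1
        overrides[rec["prompt_version"]] += int(rec["human_override"])
    return {version: overrides[version] / totals[version] for version in totals}


params = {"temperature": 0.0}
log_decision("SUB-001", "uw-triage@3", params, ["loss_history_lookup"], {"tiv": 1_000_000}, "refer")
log_decision("SUB-002", "uw-triage@3", params, ["loss_history_lookup"], {"tiv": 250_000}, "decline", human_override=True)
log_decision("SUB-003", "uw-triage@4", params, ["loss_history_lookup"], {"tiv": 400_000}, "accept")
log_decision("SUB-004", "uw-triage@4", params, ["loss_history_lookup"], {"tiv": 900_000}, "refer")

print(override_rate_by_version(decision_log))
# {'uw-triage@3': 0.5, 'uw-triage@4': 0.0}
```

Grouping overrides by prompt version is one simple way to see whether a change made reviewers disagree with the agent more or less often; the threshold at which a rate warrants review is a governance decision, not something the code decides.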

The carriers that have built this treat governance as an engineering problem, not a policy exercise.

Where to Start

Start with the agent registry. For each AI system in production, name it, document what it does, record the live version, and identify its owner. Add prompt version control before the next agent change, and decision logging before the next production deployment. Governance built incrementally as infrastructure - before the examination, before the finding, before the failure - gets agents to production faster and lasts longer than governance assembled in response to any of them.

The regulatory direction is clear, and the examination questions are already being asked. The difference between carriers that answer them confidently and those that cannot is infrastructure that existed before the question arrived.