Insurance rests on a single promise: pay now, and we will protect you later. That promise depends entirely on trust — trust that the premium is fair, the claim will be honored, and the humans and systems on the other side are acting in good faith. That trust is fracturing.

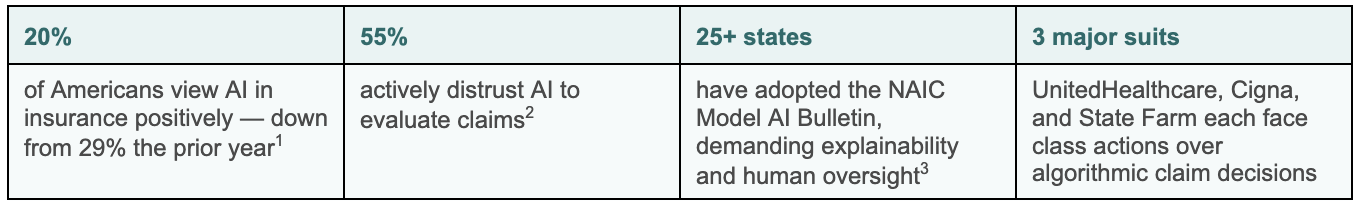

THE STAKES

The industry is spending billions on AI while trust erodes. Why? Because we have been measuring trust as a series of isolated, bilateral relationships rather than as a living, interdependent system.

THE DYADIC TRAP

A systematic review of 66 studies (N = 31,198) found that 74% of existing trust frameworks remain dyadic: they measure trust in the AI, the human adjuster, or the outcome, each in isolation. None can capture what happens when an opaque algorithm denies a claim, a human rubber-stamps it, and a policyholder loses faith not just in that insurer but in the entire industry.

Insurance doesn't work in dyads. It works in triads.

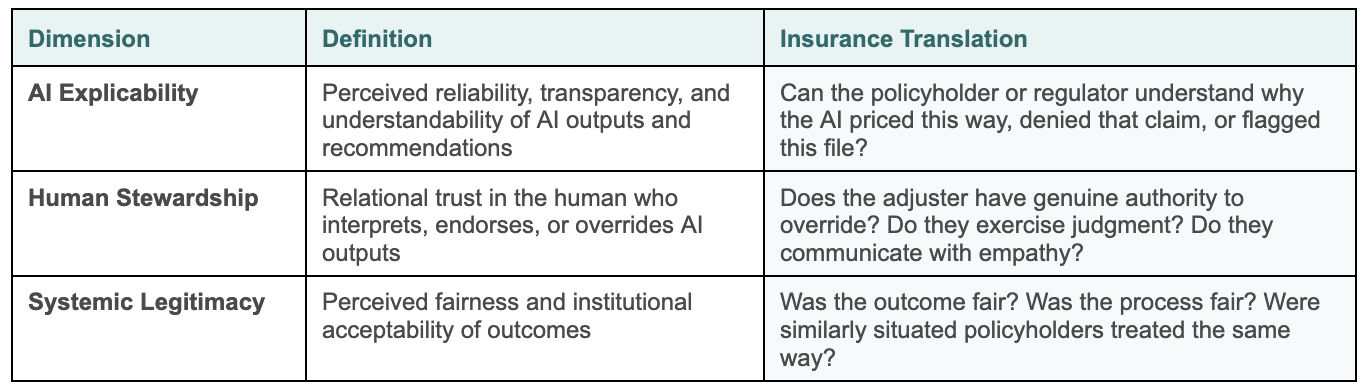

THE TRUST ECOLOGY FRAMEWORK

The Trust Ecology Framework treats trust as an emergent property of three interdependent dimensions: AI Explicability, Human Stewardship, and Systemic Legitimacy.

The critical insight is interdependence. These dimensions continuously influence each other through a mechanism called attributional reappraisal: when policyholders perceive unfair outcomes (low Systemic Legitimacy), they retroactively revise trust in the AI and in the human steward downward, regardless of prior performance evidence. Low legitimacy poisons the entire system. This is what dyadic models cannot see.
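The reappraisal mechanism described above can be illustrated with a toy model. This is only a sketch under stated assumptions, not the framework's formal specification: the 0 to 1 trust scale, the `TrustState` type, and the `threshold` and `strength` parameters are all hypothetical names and values introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustState:
    """Trust scores on an assumed 0-1 scale (the scale is hypothetical)."""
    explicability: float  # trust in the AI
    stewardship: float    # trust in the human steward
    legitimacy: float     # perceived fairness of outcomes


def reappraise(state: TrustState, threshold: float = 0.5,
               strength: float = 0.8) -> TrustState:
    """Toy attributional reappraisal: low legitimacy drags the other two down.

    `threshold` and `strength` are hypothetical tuning parameters.
    """
    if state.legitimacy >= threshold:
        return state  # outcomes look fair, so nothing is revised
    # Shortfall is 0 at the threshold and 1 when legitimacy is 0.
    shortfall = (threshold - state.legitimacy) / threshold
    factor = 1.0 - strength * shortfall
    return TrustState(
        explicability=state.explicability * factor,
        stewardship=state.stewardship * factor,
        legitimacy=state.legitimacy,
    )


# High prior trust in the AI and the steward, but outcomes seen as unfair.
before = TrustState(explicability=0.9, stewardship=0.8, legitimacy=0.1)
print(reappraise(before))
```

In this sketch, strong prior scores for the AI and the steward do not protect them: once legitimacy falls below the threshold, both are revised downward by the same factor. A dyadic model, which scores each dimension independently, has no equivalent of this step.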

THE FRAMEWORK IN ACTION: FOUR DEFINING CASES

01 UnitedHealthcare — nH Predict Algorithm — Class action, 2023, continuing

The lawsuit alleges a 90% error rate: nine in ten denials that were appealed were overturned. Staff were reportedly pressured to keep patient stays within 1% of the algorithm's prediction. Only 0.2% of policyholders ever filed appeals, suggesting the process was designed to overwhelm, not to remedy.

Triadic read:

• AI Explicability: Opaque denials; patients could not understand why they were cut off

• Human Stewardship: Clinical judgment subordinated to machine output

• Systemic Legitimacy: 90% overturn rate signals systemic failure — fracture cascade followed

When patients discovered appeals succeeded, they retroactively lost trust in the AI, the insurer, and the entire Medicare Advantage system — attributional reappraisal in action, cascading across all three dimensions simultaneously.

02 Cigna — PxDx Algorithm — Class action, 2023, advancing

A ProPublica investigation found Cigna denied over 300,000 claims in two months of 2022, with physicians averaging 1.2 seconds per claim — effectively no individual review. One medical director signed off on 60,000 denials in a single month.

Triadic read:

• Human Stewardship: Complete collapse — review was a rubber stamp, not a safeguard

• AI Explicability: Logic hidden; patients could not understand denials

• Systemic Legitimacy: 300,000 denials without proper medical review

This is not just an AI problem. The human failure is arguably worse — and attributional reappraisal means the Stewardship collapse retroactively poisons trust in the AI as well. Dyadic models that measure the two independently miss this entirely.
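The review-time figures reported in the Cigna case can be sanity-checked with simple arithmetic. The sketch below uses the reported numbers as given (300,000 is a lower bound, since the report says "over 300,000"); the 160 working hours per month is an assumption added purely for illustration and does not come from the investigation.

```python
CLAIMS_DENIED = 300_000    # reported lower bound, two months of 2022
SECONDS_PER_CLAIM = 1.2    # reported average physician time per claim

# Total physician time spent across all of the denials.
total_hours = CLAIMS_DENIED * SECONDS_PER_CLAIM / 3600
print(f"Total physician review time: {total_hours:.0f} hours")  # 100 hours

# Assumption for illustration only: one director, about 160 working hours
# in the month, spent on nothing but these denials.
DENIALS_IN_MONTH = 60_000
ASSUMED_WORK_HOURS = 160
seconds_each = ASSUMED_WORK_HOURS * 3600 / DENIALS_IN_MONTH
print(f"Time available per denial: {seconds_each:.1f} seconds")  # 9.6 seconds
```

On these figures, roughly 100 physician-hours covered the entire two-month batch, and even a full-time month devoted to nothing else would leave under ten seconds per denial.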

03 State Farm — Alleged Racial Bias — Huskey v. State Farm, pending

Plaintiffs allege State Farm's claims algorithms disproportionately flagged Black homeowners' claims — 39% more likely to be asked for extra documentation, with claims taking months longer to resolve. Fair Housing Act violations alleged.

Triadic read:

• Systemic Legitimacy: Racially disparate outcomes poison trust at a societal level

• Human Stewardship: Adjusters followed algorithmic flags without independent judgment

• AI Explicability: The algorithm is retroactively recast as inherently biased, and adjusters as complicit

04 Nippon Life v. OpenAI — Filed March 2026, N.D. Illinois

A former disability claimant uploaded her lawyer's correspondence to ChatGPT, fired her attorney, then filed 21 motions and eight notices that courts found served no legitimate legal purpose. Nippon Life seeks $300,000 in compensatory damages and $10 million in punitive damages.

Triadic read:

• AI Explicability: ChatGPT appeared authoritative; user could not distinguish information from legal advice

• Human Stewardship: Zero qualified human in the loop in a high-stakes legal process

• Systemic Legitimacy: Abuse of process and real financial harm to a third-party insurer

This is the first insurance lawsuit in which AI's role in eroding all three trust dimensions simultaneously is the central claim.

EXTENSION: WHEN THE AI IS THIRD-PARTY

The Nippon Life case surfaces an important boundary condition: the Trust Ecology Framework applies even when the eroding AI is not insurer-controlled. In cases involving third-party platforms — consumer chatbots, embedded advice tools, or AI legal assistants — the triad remains intact, but the locus of Human Stewardship shifts. Where an insurer's adjuster once bore stewardship responsibility, accountability now migrates to platform design: the guardrails, disclosures, and refusal behaviors built into the AI itself. An insurer that deploys or integrates a third-party AI tool inherits a stewardship obligation for how that tool presents itself to users — whether it clearly distinguishes information from advice, whether it escalates appropriately to a qualified human, and whether its outputs are auditable when things go wrong. The framework, therefore, extends naturally to AI governance in distribution, claims assistance, and customer-facing automation: the three dimensions do not change, but the responsible steward must be explicitly identified.

WHAT A TRIADIC DIAGNOSTIC ENABLES

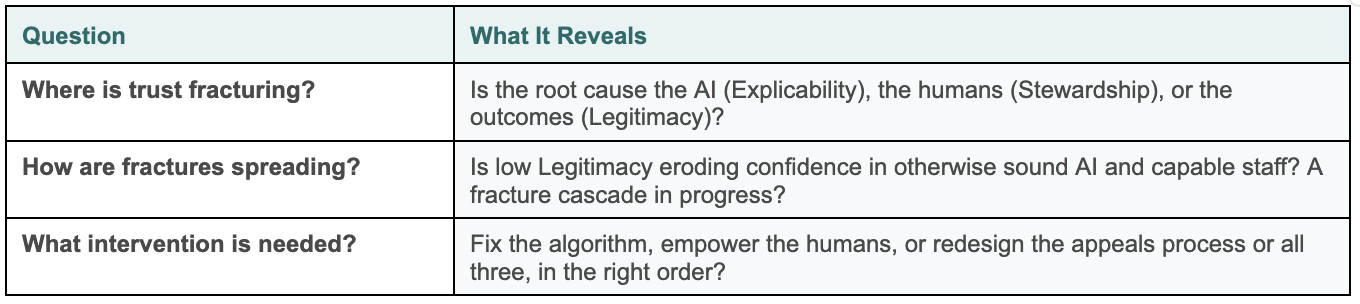

Current metrics such as NPS, satisfaction scores, and cycle times measure symptoms, not the system. They cannot answer three questions that are now existential for the industry.

A trust ecology scale measuring AI Explicability, Human Stewardship, Systemic Legitimacy, and their interconnections across 19 items can operationalize these diagnostics into a quarterly trust health dashboard by product line, region, or customer segment.
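One way such a dashboard could be assembled is sketched below. It is illustrative only: the item ids, the item-to-dimension mapping, the 1 to 5 Likert range, and the sample responses are all hypothetical, and the sketch uses six placeholder items rather than the scale's actual 19. It also reports only per-dimension means and does not attempt to score the interconnections between dimensions.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical item-to-dimension map; the real 19-item assignment is not
# specified here, so six placeholder items stand in for it.
ITEM_DIMENSION = {
    "q1": "AI Explicability",
    "q2": "AI Explicability",
    "q3": "Human Stewardship",
    "q4": "Human Stewardship",
    "q5": "Systemic Legitimacy",
    "q6": "Systemic Legitimacy",
}


def dashboard(responses, item_dimension=ITEM_DIMENSION):
    """Return {(quarter, segment): {dimension: mean score}}.

    Each response is a dict with "quarter", "segment", and an "answers"
    dict mapping item ids to Likert scores (1-5 assumed).
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for r in responses:
        key = (r["quarter"], r["segment"])
        for item, score in r["answers"].items():
            buckets[key][item_dimension[item]].append(score)
    return {
        key: {dim: round(mean(scores), 2) for dim, scores in dims.items()}
        for key, dims in buckets.items()
    }


responses = [  # made-up survey rows for illustration
    {"quarter": "2026Q1", "segment": "auto",
     "answers": {"q1": 4, "q2": 4, "q3": 3, "q4": 5, "q5": 2, "q6": 2}},
    {"quarter": "2026Q1", "segment": "auto",
     "answers": {"q1": 2, "q2": 4, "q3": 3, "q4": 3, "q5": 1, "q6": 3}},
]
for key, scores in dashboard(responses).items():
    print(key, scores)
```

Grouping by product line or region instead of customer segment would only require changing the key that each response is bucketed under.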

REGULATORY ALIGNMENT

Regulators are now explicitly demanding all three dimensions:

• EU AI Act — transparency (Explicability) and human oversight (Stewardship) for high-risk AI

• NAIC Model AI Bulletin (25+ states) — governance, fairness, explainability, auditability

• New York DFS Circular Letter 2024-7 — proof that AI does not proxy for protected classes (Legitimacy)

• Colorado Regulation 10-1-1 — quantitative fairness testing

The scale aligns with all four frameworks, giving insurers a defensible, evidence-based way to demonstrate that they are monitoring trust as a system, not just checking compliance boxes.

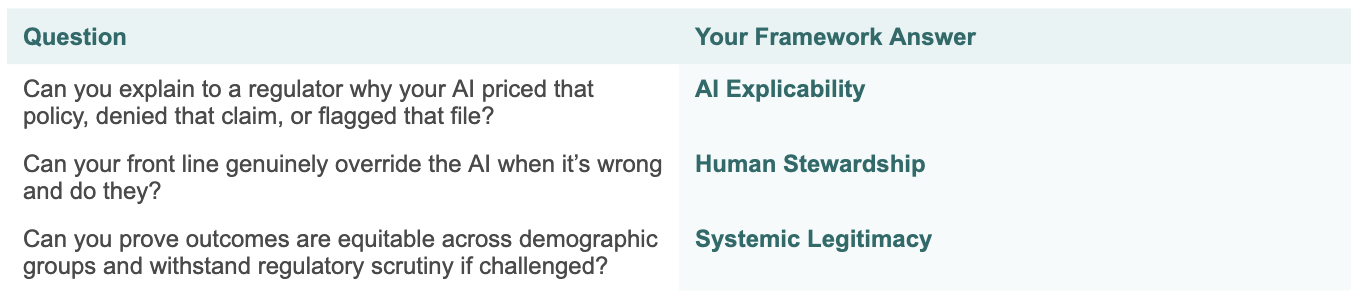

THREE QUESTIONS EVERY INSURER SHOULD ASK TODAY

If you cannot answer yes to all three, you have a trust fracture, and you are likely measuring it with the wrong instrument.

UnitedHealthcare, Cigna, State Farm — these are not cautionary hypotheticals. They are the framework in vivo.

A triadic diagnostic would have given leaders in each organization early warning, precise diagnosis, and targeted repair options before the lawsuits, the headlines, and the consumer trust collapse. The future of insurance will not be defined by algorithms alone but by how responsibly we govern them, and whether we finally measure trust the way it actually works: as a triadic, interconnected, living system.

REFERENCES

[1] Insurity. (2025). 2025 P&C Consumer Survey: AI in Insurance. Hartford, CT. Online survey of 1,000+ US adults, January 2025. Available at: insurity.com

[2] YouGov. (2025). Are Americans Ready to Trust AI with Their Health Insurance? YouGov Surveys: Serviced. N = 1,181 insured US adults. Available at: yougov.com

[3] National Association of Insurance Commissioners (NAIC). (2023). Model Bulletin on the Use of Artificial Intelligence Systems by Insurers. Adopted by 25+ US states as of Q1 2026.

[4] Hor, R. (2026). Trust Ecology Framework: Systematic Review (N = 31,198; 66 studies). Unpublished doctoral dissertation, Saint Mary’s University.