Picture the call.

A state insurance commissioner's office. Your legal team. A customer's attorney. An AI-generated claim denial that affected someone's home, their health, or their livelihood. The question on the table is not whether your model was accurate. The question is who in your organization reviewed that specific decision, what they actually checked, and where the documentation is.

You look around the room.

The data science team points to the risk function. The risk function points at the business unit. The business unit points at the model. The model has no name. The model cannot be deposed. The model's directors and officers (D&O) liability policy does not exist.

Yours does.

The question moving through every insurance boardroom right now is not whether your AI works. It is whether you can prove a human being — a named, accountable, documentable human being — was genuinely in the loop when it didn't.

I have spent two decades working inside financial services organizations across North America, Asia Pacific, and EMEA — in insurance, banking, and enterprise technology. I have been in the rooms where this question lands. The silence it produces is not incompetence. It is the sound of an industry that built extraordinary AI capability and forgot to build the accountability architecture around it.

That silence is becoming expensive.

Your Accuracy Dashboard Is Not a Defense

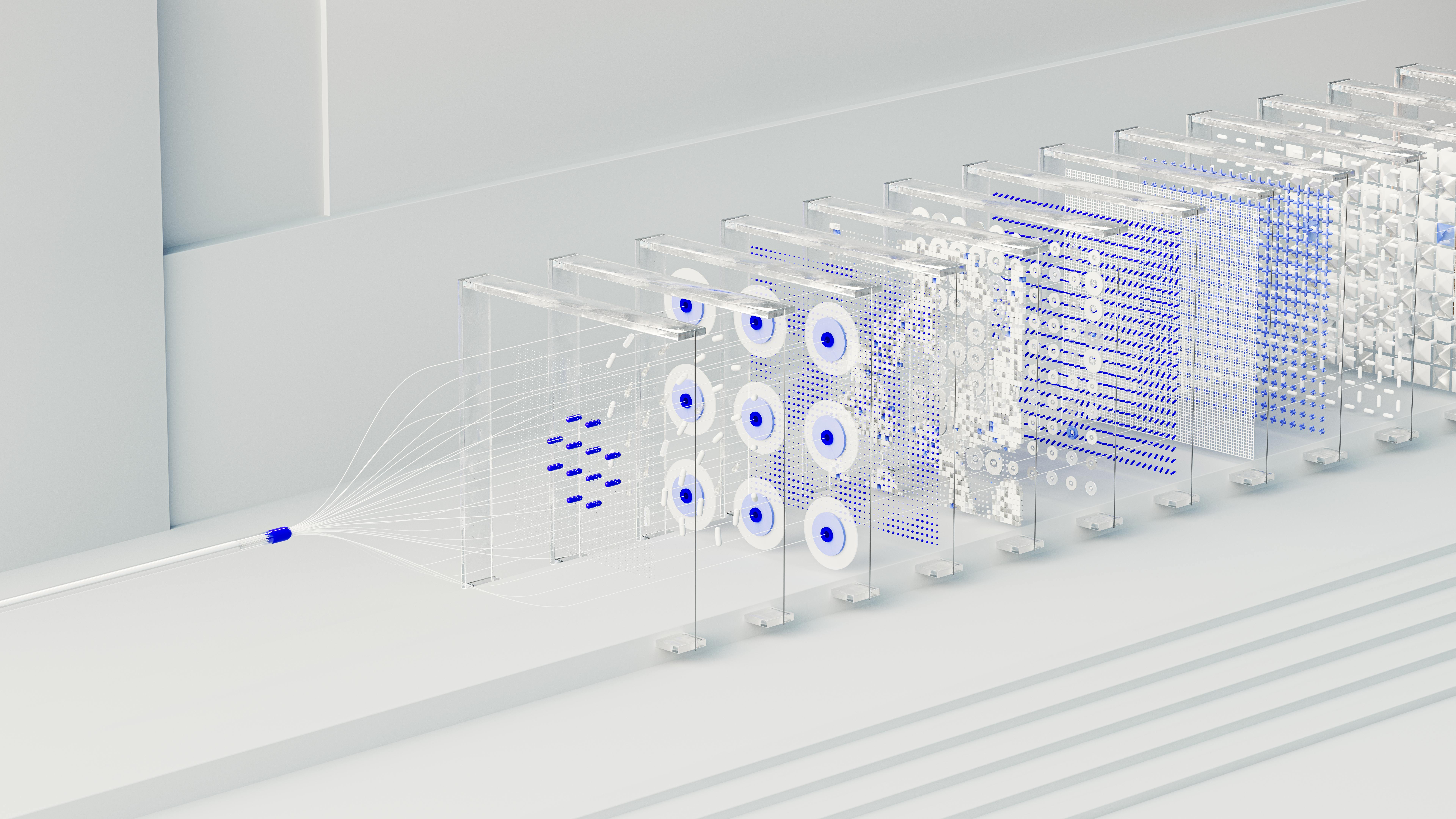

Here is what your AI governance documentation almost certainly shows: model performance metrics. Accuracy rates. Loss ratios. Straight-through processing volumes. Fraud detection rates. These numbers are real, and the investment behind them is genuine.

Here is what your AI governance documentation almost certainly does not show: the name of the human who reviewed the decision that is now in dispute. What they were trained to look for. How long they spent on it. Whether they had the authority — and the actual expectation — to override the model's recommendation.

Those are two entirely different documents. Most insurers have the first. Almost none have the second.

Under the EU AI Act, OSFI Guideline E-23, and SR 11-7, the second document is what matters. Regulators are not asking whether your model performs well in aggregate. They are asking whether a specific decision, the one in front of them, had meaningful human oversight. Meaningful. Not ceremonial. Not a click-through.

Accuracy metrics tell you how often the AI is right. They tell you nothing about whether the human in the loop actually understood what they were approving.

Most insurers have the checkbox. Very few have a defensible record. That gap — between the checkbox and the defensible record — is where the liability lives.

What Happened in the Netherlands Will Happen Here

In the Dutch childcare benefits scandal, which a parliamentary inquiry laid bare in 2020, the tax authority's risk-scoring system wrongly flagged some 26,000 families as suspected fraudsters. Most were innocent. The algorithm ran for years. The humans trusted it. No one built a mechanism for those humans to meaningfully question what the system was telling them.

By the time the full picture emerged, families had lost homes. Children had been taken into care. Careers had been destroyed. In January 2021, the prime minister and his cabinet resigned. The government fell.

Not because the AI was malicious, but because no one could name the human responsible for any specific decision. The accountability architecture was missing. And when it was missing at scale — across 26,000 families — there was no one to hold accountable except the institution itself.

That story is not a European warning. It is a preview.

The same structural failure exists in US healthcare AI, in automated claims systems, in credit decision making, and in hiring algorithms. The technology performs as designed. The human layer — the named, documented, trained, empowered human layer — is absent or ceremonial. When something goes wrong at scale, the institution absorbs the liability because no individual can be identified as responsible.

Unfair AI doesn't just break trust between a customer and a machine. It collapses trust across your entire organization — retroactively. And the collapse travels up the chain until it finds someone with a name.

That name will be on your org chart. It may be yours.

Run This Test Before You Read the Next Section

Pull three recent AI-denied claims from your system. Any three.

For each one, answer these questions: Who is the named human reviewer in the audit trail? What specific aspects of the AI recommendation did they evaluate? Is there documentation showing they genuinely interrogated the output — not just approved it?

If you can produce complete, defensible answers for all three in under 10 minutes, your AI governance is in reasonable shape.

If you cannot — if the trail goes cold at "the system flagged it" or "the team reviewed it" — you have just identified your exposure. That is not a criticism. It is a diagnostic. It is also, increasingly, what plaintiff attorneys run on insurers before they file. What D&O underwriters are beginning to check at renewal. What state insurance commissioners are starting to request in market conduct examinations.

The gap you just found is the gap this article is about.
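For teams that would rather run this test against an export than by hand, here is a minimal Python sketch. It assumes each denied claim can be exported as a dictionary. The field names (`reviewer_name`, `aspects_evaluated`, `review_notes`) and the list of generic placeholders are hypothetical and would need to be mapped to whatever your claims system actually records.

```python
# Hypothetical placeholders that indicate a team or a system, not a person.
GENERIC_REVIEWERS = {"", "system", "team", "claims team", "auto", "n/a"}


def audit_gaps(record: dict) -> list[str]:
    """Return the diagnostic questions this audit record cannot answer."""
    gaps = []

    # Question 1: is there a named human reviewer?
    reviewer = (record.get("reviewer_name") or "").strip().lower()
    if reviewer in GENERIC_REVIEWERS:
        gaps.append("no named human reviewer")

    # Question 2: what specific aspects did they evaluate?
    if not record.get("aspects_evaluated"):
        gaps.append("no record of what the reviewer evaluated")

    # Question 3: is there any written trace of the review itself?
    if not (record.get("review_notes") or "").strip():
        gaps.append("no evidence the output was interrogated")

    return gaps


# Illustrative records only; not real claims data.
sample_claims = [
    {"claim_id": "A-1", "reviewer_name": "Claims Team",
     "aspects_evaluated": [], "review_notes": ""},
    {"claim_id": "A-2", "reviewer_name": "J. Rivera",
     "aspects_evaluated": ["coverage clause", "damage photos"],
     "review_notes": "Checked exclusion wording against the policy; denial stands."},
]

for claim in sample_claims:
    gaps = audit_gaps(claim)
    status = "defensible" if not gaps else "; ".join(gaps)
    print(f"{claim['claim_id']}: {status}")
```

A check like this only proves that the fields are populated. Whether the notes show genuine interrogation of the output still takes a human reading them.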

Three Ways to Close the Gap — Before Someone Closes It for You

Name the human — in the system, in the record, in the audit trail. Every high-stakes AI decision — claim denial, underwriting declination, fraud escalation, pricing exception — needs a named individual reviewer, not a team, not a role, not a function. A person. Because when the commissioner's office calls, they will ask for that person. If you cannot produce a name, you cannot produce a defense.

Build the authority to say no — and document when it is used. The difference between meaningful oversight and rubber-stamping is whether your reviewers have explicit authority to override the AI, training to know when they should, and time to exercise that judgment. If your straight-through processing rates are above 95%, ask yourself honestly: is that efficiency, or is it the absence of human judgment? Regulators are beginning to ask the same question.
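One way to put a number on that question is to measure how often each reviewer actually disagrees with the model, and how long their reviews take. The Python sketch below is illustrative and rests on stated assumptions: the `Review` fields are hypothetical, and the thresholds (a 1% override rate, 30 seconds per review) are placeholders chosen for the example, not regulatory standards.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Review:
    reviewer: str          # a named individual, not a team
    overrode_model: bool   # True if the reviewer rejected the AI recommendation
    seconds_spent: float   # time between opening and closing the review


def rubber_stamp_signals(reviews, min_override_rate=0.01, min_median_seconds=30.0):
    """Summarize each reviewer and flag patterns that look like rubber-stamping.

    Both thresholds are assumed placeholders for illustration.
    """
    by_reviewer = {}
    for r in reviews:
        by_reviewer.setdefault(r.reviewer, []).append(r)

    summary = {}
    for name, items in by_reviewer.items():
        override_rate = sum(r.overrode_model for r in items) / len(items)
        median_seconds = median(r.seconds_spent for r in items)
        summary[name] = {
            "reviews": len(items),
            "override_rate": override_rate,
            "median_seconds": median_seconds,
            # Flag only when overrides are rare AND reviews are very fast.
            "flagged": (override_rate < min_override_rate
                        and median_seconds < min_median_seconds),
        }
    return summary


# Illustrative data only.
reviews = [
    Review("A. Chen", False, 8.0),
    Review("A. Chen", False, 12.0),
    Review("A. Chen", False, 10.0),
    Review("B. Okafor", True, 240.0),
    Review("B. Okafor", False, 180.0),
]

for name, stats in rubber_stamp_signals(reviews).items():
    print(name, stats)
```

A flag here is a prompt for a conversation, not a finding. A low override rate can also mean the model is performing well on that reviewer's caseload.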

Audit fairness separately from accuracy. Your model validation process measures performance. It does not measure whether the outcomes your AI produces are perceived as fair by the people affected. Consistency of treatment across demographics. Accessibility of recourse. Clarity of explanation. These are legitimacy measures and they require a different audit. The insurers who build this capability now will be positioned as leaders. The ones who wait will be building it during an investigation.
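Of the three legitimacy measures, consistency of treatment is the easiest to start quantifying. The Python sketch below compares denial rates across groups and reports the ratio of the lowest rate to the highest. It is a simple rate comparison chosen for illustration, not a complete fairness audit and not a legal test. The group labels and data are hypothetical, and the check says nothing about recourse or clarity of explanation.

```python
from collections import defaultdict


def denial_rates(decisions):
    """Compute the denial rate per group from (group, denied) pairs."""
    totals = defaultdict(int)
    denials = defaultdict(int)
    for group, denied in decisions:
        totals[group] += 1
        denials[group] += int(denied)
    return {g: denials[g] / totals[g] for g in totals}


def consistency_ratio(rates):
    """Lowest denial rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group had any denials
    return min(rates.values()) / highest


# Illustrative data only: (group label, was the claim denied?)
decisions = [
    ("group_a", True), ("group_a", False), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", True), ("group_b", False), ("group_b", False),
]

rates = denial_rates(decisions)
print(rates)
print(consistency_ratio(rates))
```

A ratio well below 1.0 does not prove unfair treatment, since groups can differ in legitimate risk factors. It does tell you where to look first, and it is a number your accuracy dashboard does not report.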

The Verdict Is Already Being Written

The insurance industry did not get here through negligence. It got here through speed. AI capability moved faster than governance frameworks. Deployment timelines outran accountability infrastructure. The checkbox appeared because it was faster than the defensible record. None of that was malicious.

But 2025 is not 2019. The EU AI Act is not a distant concern. It is setting the global documentation standard, and US regulators are actively incorporating its logic. D&O underwriters are beginning to ask about AI governance at renewal. State insurance commissioners are starting to include AI decision audit trails in market conduct examinations. Class action attorneys are looking for patterns in AI-driven denials.

The verdict on your AI governance is being written right now: by regulators, by courts, by customers who received a decision they couldn't understand or challenge. It is being written in the audit trails you do or do not have. In the names you can or cannot produce. In the documentation that proves a human being was genuinely, meaningfully in the loop.

The algorithm will not appear in that verdict. It cannot be deposed. It cannot be held accountable. It does not have a name.

You do.

"The algorithm decided" is not a name. It's a future deposition headline.