This is part two of a series of five on the topic of risk appetite and its associated FAQs.

The author believes that enterprise risk management (ERM) will remain locked in organizational silos until boards understand the links between risk and strategy. That understanding is reached either through painful and expensive crises or through the less expensive development of a risk appetite framework (RAF). Understanding risk appetite is very much a work in progress for many organizations.

The first article made a number of observations of a general nature based on experience in working with a wide variety of companies. This article describes the risk landscape, measurable and unmeasurable uncertainties and the evolution of risk management.

The Risk Landscape

Lessons learned following the great financial crisis (GFC) include the importance of establishing an effective risk governance framework at the board level. In essence, two key questions must now be addressed by boards.

First, do boards express clearly and comprehensively the extent of their willingness to take risk to meet their strategic and business objectives? Second, do they explicitly articulate risks that have the potential to threaten their operations, business model and reputation?

To be in a position to provide credible answers to these fundamental questions, we must first seek to understand the relationship between risk and strategy.

It is RMI’s experience that risk and strategy are intertwined. One does not exist without the other, and they must be considered together. Such consideration needs to take place throughout the execution of strategy. Consequently, it is vital that due regard is given to risk appetite when strategy is being formulated.[1]

Crucially, risk is now defined as "the effect of uncertainty on objectives."[2]

It is clear, therefore, that effective corporate governance is strategy- and objective-setting on the one hand, and superior execution with due regard for risks on the other. This particular landscape is what we in RMI refer to as the interpolation of risk and strategy. For this reason, RMI describes board risk assurance as assurance that strategy, objectives and execution are aligned. Alignment is achieved through operationalization of the links between risk and strategy, which will be described in the final article in this series.

Before further discussion, however, we would like to draw attention to observations based on our practical experience that give cause for concern, namely:

1. Risk appetite: While we now have a globally accepted risk management standard[3] and a sharper regulatory definition of effective risk management for regulated organizations, there is still much confusion, and neither consensus nor internationally accepted guidance, as to the attributes of an effective risk appetite framework.

2. Risk reporting: Two significant matters arise:

Risk registers that are primarily generated on the basis of a compliance-centric requirement, as distinct from an objectives-centric[4] approach, tend to contain lists of risks that are not explicitly associated with objectives. As such, they offer little value in terms of reporting on risk performance.

Note: RMI supports the adoption of a board-driven, objectives-centric approach[5] to reporting and monitoring risks to operations, the business model and reputation.

Risk registers and other reporting tools detail known risks, that is, what we know we know. They tend not to detail emerging or high-velocity risks that have the potential to threaten the business model. As such, they tend to be of limited value in terms of reporting or monitoring either unknown known[6] or unknown unknown[7] risks. This is a matter that should give boards cause for concern given the pace of change, hyper-connectivity and the disruptive nature of new technologies.

3. Risk data governance: The quality, rigor and consistency with which well-managed organizations handle accounting data are rarely matched in how those same organizations handle risk data.

The responsibility of directors to use reliable accounting information and apply controls over assets, etc. (internal controls) as part of their legally mandated role extends equally to information pertaining to risks that threaten financial performance. The latter is not, however, treated in an equivalent fashion to accounting data. Whereas the integrity of accounting data is assured through the use of proven and accepted accounting systems subject to audit, information pertaining to risks typically relies on disparate Excel spreadsheets, Word documents and PowerPoint presentations, with weak controls over the copying and pasting of data from one level of report to another.

Weaknesses and failings in risk data governance can be addressed in much the same way as for other governance requirements.

For example:

a. Comprehensive training for business line managers and supervisors on:

- (Risk) Management Processes,

- (Risk) Vocabulary,

- (Risk) Reporting,

- Board (Risk) Assurance Requirements

b. Performance in executing (risk) management roles and responsibilities included in annual performance appraisals,

c. System[8] put to process through the use of database/workflow solutions, providing an evidence basis of assurance that:

- The quality, timing, accessibility and auditability of risk performance data are as rigorously and consistently applied as those for accounting data,

- Dynamic management of risk data (including risk appetite/tolerance/criteria) can be tracked at the pace of change,

- Tests can be applied to the aggregation of risks to objectives at the pace of change and prompt interdictions applied when required,

- Reports, or notification, of significant risks are escalated without delay, and without risk to the originator of information.
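As an illustration only, the kind of test described above (aggregating risks to objectives and flagging where tolerance is exceeded) might be sketched as follows. The `Risk` record, the exposure figures, the tolerance values and the simple summing of exposures are all assumptions made for this example; they are not drawn from RMI's method or from ISO 31000, and a real system would need to consider correlations between risks.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """Hypothetical register entry: every risk is tied to one objective."""
    name: str
    objective: str
    exposure: float  # assumed unit: estimated loss in $ millions


def breaches(risks, tolerance):
    """Sum exposure per objective and return objectives over tolerance.

    Simple addition is an assumption of this sketch. Objectives with no
    stated tolerance are never flagged here.
    """
    totals = defaultdict(float)
    for risk in risks:
        totals[risk.objective] += risk.exposure
    return {
        objective: total
        for objective, total in totals.items()
        if total > tolerance.get(objective, float("inf"))
    }


# Illustrative data only.
register = [
    Risk("Supplier failure", "Market share", 4.0),
    Risk("Data breach", "Customer service", 3.0),
    Risk("Product recall", "Market share", 5.0),
]
tolerance = {"Market share": 8.0, "Customer service": 5.0}

flagged = breaches(register, tolerance)
print(flagged)
```

Because each entry carries its objective, the register stays objectives-centric rather than being a bare list of risks, and the same check can be rerun whenever the risk data or the tolerance values change.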

4. Lack of understanding of the nature of the risks that need to be mastered in the boardroom:

Going back to our definition of risk as the effect of uncertainty on objectives: There are many types of objectives -- for example, economic, financial, political, regulatory, operational, customer service, product innovation, market share, health and safety, etc. -- and there are multiple categories of risk. But what is uncertainty?

Uncertainty[9] is the state, even partial, of deficiency of information related to understanding or knowledge of an event, its consequence or its likelihood.

There are essentially two kinds of uncertainty:

1. Measurable uncertainties: These are inherently insurable because they occur independently (for example, traffic accidents, house fires, etc.) and with sufficient frequency as to be reckonable using traditional statistical methods.

Measurable uncertainties are treated individually through traditional (risk) management supervision, and residually through insurance.

Measurable uncertainties are funded out of operating profits.

2. Unmeasurable uncertainties: These are inherently uninsurable using traditional methods because of the paucity of reliable data. For example, whereas we can observe multiple supply chain and service interruptions, data breaches, etc., they are not sufficiently similar or comparable to be soundly fitted to a probability distribution and statistically analyzed.

Unmeasurable uncertainties are treated on a broad basis through organizational resilience. For the top 5-15 corporate risks[10] that are typically inestimable in terms of likelihood of occurrence, the organization seeks to maintain an ability to absorb and respond to shocks and surprises and to deliver credible solutions before reputation is damaged and stakeholders lose confidence.

Unmeasurable uncertainties are funded out of the balance sheet.
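To make the contrast concrete, a measurable uncertainty can be priced from its own history. The minimal sketch below estimates an expected annual loss as average frequency multiplied by average severity, which is one common simplification and not a method prescribed by the sources cited here. All figures are invented for illustration.

```python
from statistics import mean

# Hypothetical history for a frequent, independent event type
# (for example, minor vehicle accidents in a fleet).
annual_counts = [10, 14, 12]             # events observed per year
claim_sizes = [2000.0, 3000.0, 2500.0]   # loss per event, in dollars

frequency = mean(annual_counts)   # average number of events per year
severity = mean(claim_sizes)      # average loss per event

# Frequency-times-severity estimate; assumes events are independent
# and that history is representative of the future.
expected_annual_loss = frequency * severity
print(expected_annual_loss)
```

No comparable calculation is available for unmeasurable uncertainties, because the events are too few and too dissimilar to support stable estimates of frequency or severity.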

The hyper-connected and multispeed world in which we live today has driven the effect of unmeasurable uncertainties on company objectives to unprecedented heights, and so amplified the risk potential enormously.

5. Urgent need to recognize the mission-critical importance of developing management so that it is always prepared to offer credible solutions in the face of unexpected shocks and surprises.

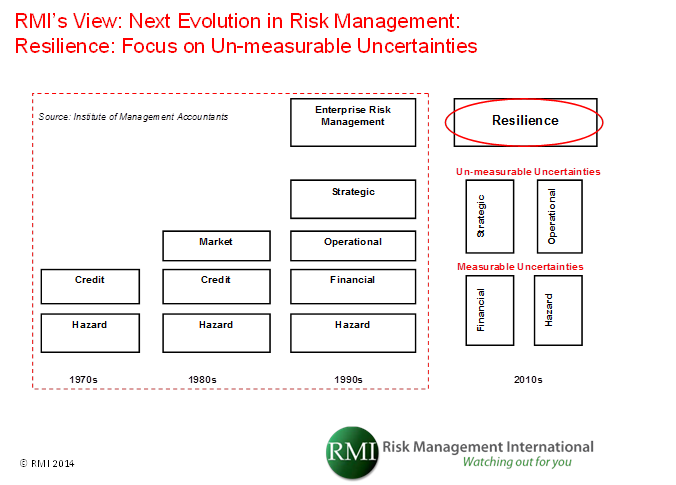

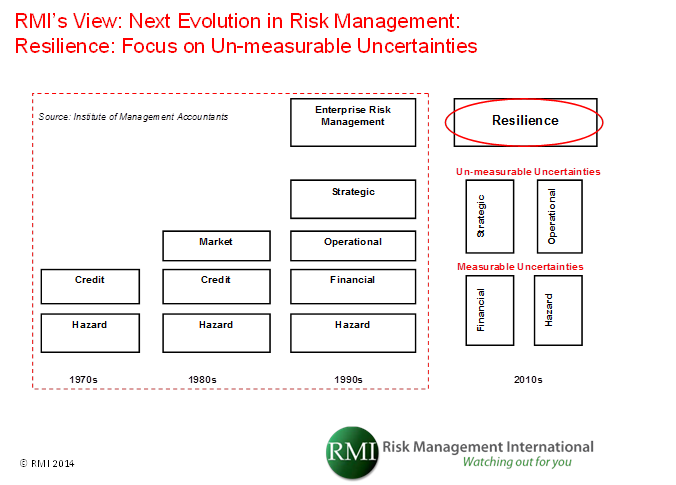

Figure 1 describes the evolution of risk management, as depicted within the red dotted line,[11] and the next stage of the evolution (resilience) as envisioned by RMI.

Figure 1: Evolution of risk and the emergence of “resilience” as the current era in the evolution of 21st century understanding of risk

Resilience was the theme that ran through the World Economic Forum's Global Risks 2013, Eighth Edition report.

Resilience was described as the capability to:

- Adapt to changing contexts,

- Withstand sudden shocks, and

- Recover to a desired equilibrium, either the previous one or a new one, while preserving the continuity of operations.

The three elements in this definition encompass both recoverability (the capacity for speedy recovery after a crisis) and adaptability (timely adaptation in response to a changing environment).

The Global Risks 2013 report emphasized that global risks do not fit neatly into existing conceptual frameworks but that this is changing insofar as the Harvard Business Review (Kaplan and Mikes[12]) recently published a concise and practical taxonomy that may also be used to consider global risks.[13]

The report advises that building resilience against external risks is of paramount importance and alerts directors to the importance of scanning a wider risk horizon than that normally scoped in risk frameworks.

When considering external risks, directors need to be cognizant of the growing awareness and understanding of the importance of emerging risks.

Emerging risks can be internal as well as external, particularly given growing trends in outsourcing core functions and processes.

It is also interesting to observe the diversity in understanding of emerging risk definitions. For example:

- Lloyd's: An issue that is perceived to be potentially significant but that may not be fully understood or allowed for in insurance terms and conditions, pricing, reserving or capital setting,

- PwC: Those large-scale events or circumstances beyond one’s direct capacity to control, that have impact in ways difficult to imagine today,

- S&P: Risks that do not currently exist.

The 2014 annual Emerging Risks Survey (a poll of more than 200 risk managers predominantly based at North American re/insurance companies) reported the top five emerging risks as follows:

- Financial volatility (24% of respondents)

- Cyber security/interconnectedness of infrastructure (14%)

- Liability regimes/regulatory framework (10%)

- Blowup in asset prices (8%)

- Chinese economic hard landing (6%)

Maintaining business defense systems capable of defending the business model[14] has become an additional fiduciary requirement for the board, alongside succession planning and setting strategic direction.[15]

References:

1 Influenced by COSO (Committee of Sponsoring Organizations of the Treadway Commission), Enterprise Risk Management (ERM): Understanding and Communicating Risk Appetite, by Dr. Larry Rittenberg and Frank Martens

2 Source: ISO 31000 (Risk Management 2009). ISO 31000 is now the globally accepted risk management standard.

3 The new globally accepted risk management standard (ISO 31000) is not intended for the purposes of certification. Rather, it contains guidance as to risk-management principles, a framework and risk management process that can be applied to any organization, part of an organization or project, etc. As such, it provides an overarching context for the application of domain-specific risk standards and regulations -- for example, Solvency II, environmental risk, supply chain risks, etc.

4 Risk Communication Aligning the Board and C-Suite: Exhibit 1 Top Challenges of Board and Management Risk Communication by Association for Financial Professionals (AFP), the National Association of Corporate Directors (NACD) and Oliver Wyman

5 The Conference Board Governance Centre, Risk Oversight: Evolving Expectations of Board, by Parveen P. Gupta and Tim J Leech

6 An unknown known risk is one that is known, and understood, at one level (e.g. typically top, middle, lower level management) in an organization but not known at the leadership and governance levels (i.e. executive and board levels)

7 An unknown unknown risk is a so-called black swan (The Black Swan: The Impact of the Highly Improbable, Nassim Nicholas Taleb)

8 Specified to the ISO 31000 series

9 Source: ISO 31000 (Risk Management 2009). ISO 31000 is now the globally accepted risk management standard

10 More than 80% of volatility in earnings and financial results comes from the top 10 to 15 high-impact risks facing a company: Risk Communication Aligning the Board and C-Suite, by the Association for Financial Professionals (AFP), the National Association of Corporate Directors (NACD), and Oliver Wyman

11 Source: Institute of Management Accountants, Statements on Management Accounting, Enterprise Risk Management : Frameworks, Elements and Integration

12 Managing Risks: A New Framework

13 Kaplan and Mikes' third category of risk is termed “external” risks, but the Global Risks 2013 report refers to them as “global risks.” They are complex and go beyond a company’s scope to manage and mitigate (i.e. they are exogenous in nature).

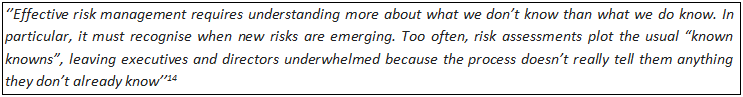

14 Audit and Risk, 21 July 2014, Matt Taylor, Protiviti UK

15 The Financial Reporting Council has determined that it will integrate its current guidance on going concern and on risk management and internal control, and make some associated revisions to the UK Corporate Governance Code (expected in 2014). It is expected that emphasis will be placed on the board's making a robust assessment of the principal risks to the company’s business model and ability to deliver its strategy, including solvency and liquidity risks. In making that assessment, the board will be expected to consider the likelihood and impact of these risks materializing in the short and longer term.