How to Prevent IRS Issues for Captives

Domiciles have no responsibility to consider federal tax issues when licensing captives -- but they should do so anyway.

Get Involved

Our authors are what set Insurance Thought Leadership apart.

|

Partner with us

We’d love to talk to you about how we can improve your marketing ROI.

|

A product recall can devastate a company's reputation and cut market share -- even if it is handled perfectly and the brand is a great one.

Should it really be possible to spend minutes on a continuing education course and get hours of credit? One that's open book? On ethics?

Some myths are based on misunderstanding -- some on misinformation spread by those with a vested interest in preserving a flawed system.

Bill Minick is the president of PartnerSource, a consulting firm that has helped deliver better benefits and improved outcomes for tens of thousands of injured workers and billions of dollars in economic development through "options" to workers' compensation over the past 20 years.

Work comp claims operations have become a key part of the customer value proposition, so it's crucial to analyze them the right way.

Claims operations have ascended the value chain from an “island in the stream” technical function into a key facet of the customer value proposition. To handle the growing demands, it's important to think about work comp claims in terms of four lanes.

The first lane is governed by compliance rules and requires not just compliance awareness but the know-how to integrate compliance optimally into the operation.

The second lane is focused on vendor management. This needs to go beyond simply outsourcing non-core competencies. Successful companies concentrate on ways to leverage vendors to achieve superior outcomes and competitive advantage.

The third lane is defined by business rules. This is where automation is fully deployed and constantly improved. This lane draws from rules-driven facets of each of the other three lanes.

The fourth lane is the “interpersonal, interpretative and professional judgment” perspective. It relies on the subjective application of knowledge and human interaction. This lane leverages engagement, training, technology and analytics to continuously accelerate accurate decision making, enhance performance and improve quality.

The four lanes represent perspectives and should not be confused with a company’s organogram. Indeed, each lane touches every facet of any organogram found in the insurance industry today.

The compliance, vendor management, business-rules and professional judgment lanes all benefit from a strong commitment to business process improvement (BPI). Data capture and analytics that support measurement of performance along the entire claims value chain are integral to BPI. The BPI discipline uses data to identify best practices, implement those practices, assess their effectiveness and uncover opportunities for further improvement.

Embracing the four-lane view and the BPI model will help carriers make strong, data-based decisions as they reconfigure their claims departments to control costs, stabilize case reserving and improve the outcomes of their claims operations. Great tools, talented people and sound business practices are the timeless ingredients of success, as is operational adaptivity.

Today’s workers’ compensation carriers are operating in an environment of increased uncertainty and complexity. Carriers face headwinds because of a shift into a healthcare-centric business, which has caught many of them flat-footed. Medical costs are approaching 70% of total claims spending in many jurisdictions. The utilization and cost of pharmaceuticals are rising at a rapid rate. According to the California Workers’ Compensation Institute, pharmacy and home-medical-equipment costs have risen by more than 250% since 2004. Today’s companies must adapt their models to concentrate on effective and efficient delivery of care that improves patient outcomes, delivers customer value and underpins superior combined ratios.

The undeniable reality is that the nature of work comp claims has changed. Traditional ideas about the core competencies necessary to run an effective claims operation need to be challenged and adjusted. Positive differentiation and sustainable market leadership depend on effectively incorporating the ingredients of success into a well-defined strategy that produces desired results and provides an agile framework for continual business evolution.

Hale Johnston formed Hale Strategic Consulting to help organizations navigate and thrive in an increasingly competitive environment. During his 20-plus-year career in insurance, he has led every facet of the workers’ compensation insurance value chain.

A single view of the customer is now paramount. Three questions must be addressed.

Mark Breading is a partner at Strategy Meets Action, a Resource Pro company that helps insurers develop and validate their IT strategies and plans, better understand how their investments measure up in today's highly competitive environment and gain clarity on solution options and vendor selection.

The North Atlantic hurricane season has begun, and CAT models need to be updated to remove the possibility of major losses.

Cheryl Fanelli delivers best-in-class analytics for modeling catastrophe exposures for clients. As manager of Marsh's CAT Modeling Center of Excellence, Fanelli and her team work closely with consultants and brokers to review, analyze and model client property data against models of historical or potential catastrophic events.

The workers' comp process is like my golf game. Both start out well enough but then go sour, and every "fix" just makes things worse.

Bob Wilson is a founding partner, president and CEO of WorkersCompensation.com, based in Sarasota, Fla. He has presented at seminars and conferences on a variety of topics related both to technology within the workers' compensation industry and to bettering the workers' comp system through improved employee/employer relations and claims management techniques.

The hack makes clear that we won't solve the crisis if we just keep doing what we're doing. We have to start asking better questions.

Adam K. Levin is a consumer advocate and a nationally recognized expert on security, privacy, identity theft, fraud, and personal finance. A former director of the New Jersey Division of Consumer Affairs, Levin is chairman and founder of IDT911 (Identity Theft 911) and chairman and co-founder of Credit.com.

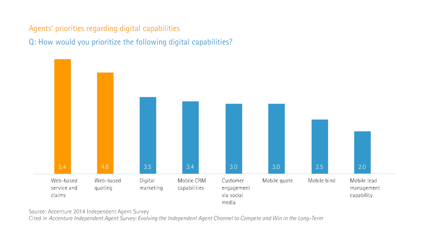

An Accenture survey finds low interest in technology among independent agents, which could impede their ability to provide exceptional service.

Given customers’ changing expectations for how service is delivered, the disconnect between independent agents’ (IAs’) intent to focus on their customers and their lower regard for omni-channel capabilities could impede IAs’ ability to continue to offer exceptional customer service. Mobile and social media capabilities, in particular, could help IAs offer the tailored, responsive experience that many customers have come to expect and demand.

Michael Costonis is Accenture’s global insurance lead. He manages the insurance practice across P&C and life, helping clients chart a course through digital disruption and capitalize on the opportunities of a rapidly changing marketplace.