Cyber: Black Hole or Huge Opportunity?

Are companies not interested in buying, or is the insurance market failing to deliver the necessary protection for cyber today?

Get Involved

Our authors are what set Insurance Thought Leadership apart.

|

Partner with us

We’d love to talk to you about how we can improve your marketing ROI.

|

Matthew Grant is the CEO of Instech, which publishes reports, newsletters, podcasts and articles and hosts weekly events to support leading providers of innovative technology in and around insurance.

While wearables and apps are associated with promoting physical fitness, technology is increasingly used in lifestyle monitoring of the elderly.

Ross Campbell is chief underwriter, research and development, based in Gen Re’s London office.

Because of self-driving, KPMG predicts that auto insurance will shrink 60% by 2050 and an additional 10% over the following decade.

While more than half of individuals surveyed by Pew Research express worry over the trend toward autonomous vehicles, and only 11% are very enthusiastic about a future of self-driving cars, lack of positive consumer sentiment hasn’t stopped several industries from steering into the auto pilot lane.

The general sentiment of proponents, such as Tesla and Volvo, is that consumers will flock toward driverless transportation once they understand the associated safety and time-saving benefits. Because of the self-driving trend, KPMG currently predicts that the auto insurance market will shrink 60% by the year 2050 and an additional 10% over the following decade.
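The KPMG figures can be read two ways, and the difference matters for planning. The sketch below is illustrative arithmetic only: it assumes the 60% decline is measured against today's market, and it shows both an additive reading (a further 10 percentage points of today's market) and a compounding reading (10% of whatever remains in 2050). The function name and both interpretations are assumptions, not part of KPMG's report.

```python
def remaining_share(first_drop: float, second_drop: float, compounding: bool) -> float:
    """Share of today's auto insurance market left after two successive declines.

    compounding=False: the second decline is in percentage points of today's market.
    compounding=True:  the second decline applies to what remains after the first.
    """
    after_first = 1.0 - first_drop
    if compounding:
        return after_first * (1.0 - second_drop)
    return after_first - second_drop


# Hypothetical readings of "60% by 2050 and an additional 10% over the following decade"
additive = remaining_share(0.60, 0.10, compounding=False)
compounded = remaining_share(0.60, 0.10, compounding=True)

print(f"Additive reading:    {additive:.0%} of today's market remains")
print(f"Compounding reading: {compounded:.0%} of today's market remains")
```

Under either reading, roughly a third of today's market would remain by 2060, which is the scale of contraction the rest of this article responds to.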

What this means for P&C insurers is change in the years ahead. A decline in individual drivers would directly correlate to a reduction in demand for the industry’s largest segment of coverage. How insurers survive will depend on several factors, including steps they take now to meet consumer expectations and needs.

The Rise of Autonomous Vehicles

Google’s Lexus RX450h SUV, as well as 34 other prototype vehicles, had driven more than 2.3 million autonomous miles as of November 2016, the last time the company published its once-monthly report on the activity of its driverless car program. Based on this success and others from companies such as Tesla, public transportation now seems poised to jump into the autonomous lane.

Waymo -- the Google self-driving car project -- recently announced a partnership with Valley Metro to help residents in Phoenix, AZ, connect more efficiently to existing light rail, trains and buses by providing driverless rides to stations. This follows closely on the heels of another Waymo pilot program that put self-driving trucks on Atlanta area streets to transport goods to Google’s data centers.

In the world of personal driving, Tesla’s Autopilot system was one of the first to take over navigational functions, though it still required drivers to have a hand on the wheel. In 2017, Cadillac released the first truly hands-free automobile with its Super Cruise-enabled CT6, allowing drivers to drive without touching the wheel for as long as they traveled in their selected lane.

Cadillac’s level-two semiautonomous driving system is expected to be quickly upstaged by Audi’s A8. Equipped with Traffic Jam Pilot, the A8 allows drivers to take their hands off the wheel and eyes off the road as long as the car is on a limited-access divided highway with a vehicle directly in front of it. While in Traffic Jam mode, drivers will be free to engage with the vehicle’s entertainment system, view text messages or even look at a passenger in the seat next to them, as long as they remain in the driver’s seat with body facing forward.

While the Audi A8 was originally set to roll off the assembly line and onto dealer lots as early as spring of 2018, lack of consumer training as well as federal regulations have encouraged the automaker to delay release in the U.S. Meanwhile, Volvo has met with similar constraints as it navigates toward releasing fully autonomous vehicles to 100 people by 2021. The manufacturer is now taking a more measured approach, one that includes training for drivers starting with level-two semiautonomous assistance systems before eventually scaling up to fully autonomous vehicles.

“On the journey, some of the questions that we thought were really difficult to answer have been answered much faster than we expected. And in some areas, we are finding that there were more issues to dig into and solve than we expected,” said Marcus Rothoff, Volvo’s autonomous driving program director, in a statement to Automotive News Europe.

Despite the roadblocks, auto makers’ enthusiasm for the fully autonomous movement hasn’t waned. Tesla’s Elon Musk touts safer, more secure roadways when cars are in control, a vision that is being embraced by others in high positions, such as Elaine Chao, U.S. Secretary of Transportation.

“Automated or self-driving vehicles are about to change the way we travel and connect with one another,” Chao said to participants of the Detroit Auto Show in January 2018. “This technology has tremendous potential to enhance safety.”

See also: The Evolution in Self-Driving Vehicles

We’ve already seen what sensors can do to promote safer driving. In a recent study conducted by the Insurance Institute for Highway Safety, rear parking sensors bundled with automatic braking systems and rearview cameras were responsible for a 75% reduction in backing crashes. According to Tesla’s website, all of its Model S and Model X cars are equipped with 12 ultrasonic sensors capable of detecting both hard and soft objects, as well as with cameras and radar that send feedback to the car.

Caution, Autonomous Adoption Ahead

The road to fully autonomous vehicles is expected to be taken in a series of steps. We have largely entered the first phase, where drivers are still in charge, aided by various safety systems that intervene in the case of driver error. As we move closer to full autonomy, drivers will assume less control of the vehicle and begin acting as a failsafe, stepping in when a system errs or when conditions arise that the system is not designed to navigate. We currently see this level of autonomous driving with Audi Traffic Jam Pilot, where drivers are prompted to take control if the vehicle departs from the pre-established roadway parameters. In the final phase of autonomous driving, the driver is removed from controlling the vehicle and is absolved of roadway responsibility, putting all trust and control in the vehicle.

KPMG predicts wide-scale adoption of this level of autonomous driving to begin taking place in 2025, as drivers realize the time-saving and safety benefits of self-driving vehicles. During this time frame, all new vehicles will be fully self-driving, and older cars will be retrofitted to conform to a road system of autonomous vehicles.

Past the advent of the autonomous trend in 2025, self-driving cars will become the norm, with information flowing between vehicles and across a network of related infrastructure sensors. KPMG expects full adoption of the autonomous trend by the year 2035, five years earlier than it first reported in 2015.

Despite straightforward predictions like these, it’s likely that drivers will adopt self-driving cars at varying rates, with some geographies moving faster toward driverless roadways than others. There will be points in the future where a major metropolis may have moved fully to a self-driving norm, mandating that drivers either purchase and use fully autonomous vehicles or adopt autonomous public transportation, while outlying areas will still be in a phase where traditional vehicles dominate or are in the process of being retrofitted.

“The point at which we see autonomy appear will not be the point at which there is a massive societal impact on people,” said Elon Musk, Tesla CEO, at the World Government Summit in Dubai in 2017. “Because it will take a lot of time to make enough autonomous vehicles to disrupt, so that disruption will take place over about 20 years.”

Will Self-Driving Cars Force a Decline in Traditional Auto Coverage?

At present, data from the National Highway Traffic Safety Administration indicates that 94% of automobile accidents are the result of human error. Taking humans largely out of the equation makes many autonomous vehicle proponents predict safer roadways in our future, but it also raises an interesting question. Who is at fault when a vehicle driving in autonomous mode is involved in a crash?

Many experts agree that accident liability will be taken away from the driver and put into the hands of the automobile manufacturers. In fact, precedents are already being set. In 2015, Volvo announced plans to accept fault when one of its autonomous cars is involved in an accident.

“It is really not that strange,” Anders Karrberg, vice president of government affairs at Volvo, told a House subcommittee recently. “Carmakers should take liability for any system in the car. So we have declared that if there is a malfunction to the [autonomous driving] system when operating autonomously, we would take the product liability.”

In the future, as automobile manufacturers take on liability for vehicle accidents, consumers may see a chance to save on their auto premiums by only carrying state-mandated minimums. Some states may even be inclined to repeal laws requiring drivers to carry traditional liability coverage on self-driving vehicles or substantially alter the coverage an individual must secure.

Despite the forward thinking of manufacturers such as Volvo, for the present, accident liability for autonomous cars is still a gray area. Following the death of a pedestrian hit by an Uber vehicle operating in self-driving mode in Arizona, questions were raised over liability.

Bryant Walker Smith, a law professor at the University of South Carolina with expertise in self-driving cars, indicated that most states require drivers to exercise care to avoid pedestrians on roadways, laying liability at the feet of the driver. But in the case of a car operating in self-driving mode, determining liability could hinge on whether there was a design defect in the autonomous system. In this case, both the auto and self-driving system manufacturers and even the software developers could be on the hook for damages, particularly in the event a lawsuit is filed.

Finding Opportunity in the Self-Driving Trend

Accenture, in conjunction with Stevens Institute of Technology, predicts that 23 million self-driving vehicles will be coursing across U.S. highways by 2035. As a result, insurers could realize an $81 billion opportunity as autonomous vehicles open new areas of coverage in hardware and software liability, cybersecurity and public infrastructure insurance by 2025, the same year that KPMG predicts the autonomous trend will begin to rapidly accelerate.

Simultaneously, Accenture predicts that personal auto premiums, which will begin falling in 2024, will hit a steeper decline before leveling out around 2050 at an all-time low. Most of the personal premium decline is due to an assumption that the majority of self-driving cars will not be owned by individuals, but by original equipment manufacturers, OTT players and other service providers such as ride-sharing companies.

It might seem like a logical conclusion if America’s love affair with the automobile weren’t so well-established. Following falling gas prices in 2016, Americans logged a record-breaking 3.22 trillion miles behind the wheel. Even millennials, the age group once assumed to have given up on driving, are showing increased interest in piloting their own vehicles as the economy improves.

According to the National Household Travel Survey conducted by the Federal Highway Administration, millennials increased their average number of miles driven 20% from 2009 to 2017. Despite falling new car sales, the University of Michigan Transportation Research Institute shows that car ownership is actually on the rise. Eighteen percent of Americans purchase a new car every two to three years, while the largest share (39%) buy one every four to six years.

Americans have many reasons for loving their vehicles. Forty percent say it’s because they enjoy driving and being in their cars, according to a survey conducted by Cars.com. ReportLinker reveals that 83% of people drive daily and that half are passionate about the behind-the-wheel experience of taking on the open road. Another survey conducted by Gold Eagle determined that people even have dream cars, vehicles that they feel convey a sporty, luxurious or efficient image.

Ownership of autonomous vehicles would bring at least some liability back to the owner-occupant. For instance, owing to security concerns, all sensing and decision-making hardware related to the Audi Traffic Jam Pilot system is held onboard. With no over-air connections, software updates must be made manually through a dealer. In situations like these, what happens if an autonomous vehicle crash is tied to the driver’s failure to ensure that software was promptly updated?

Auto maintenance will also take on a new level of importance as sensitive self-driving systems will need to be maintained and adjusted to ensure proper performance. If an accident occurs due to improper vehicle maintenance, once again, the owner could be held liable.

As the U.S. moves toward autonomous car adoption, one thing becomes clear. Insurers will need to expand their product lines to include both commercial and personal lines of coverage if they are going to take part in the multibillion-dollar opportunity.

Preparing for the Autonomous Future of Insurance

Because the autonomous trend will be adopted at an uneven pace depending upon geography, socioeconomic conditions and even age groups, Deloitte predicts that the insurers that will thrive through the autonomous disruption are those with a “flexible business model and diverse product mix.” To meet consumer expectations and maintain a critical focus on customer acquisition and retention, insurers will need a multitude of products designed to protect drivers across the autonomous adoption cycle, as well as new products designed to cover the shift of liability from driver to vehicle.

Even traditional auto policies designed to protect car owners from liability will need to be redefined to cover autonomous parameters. Currently, only 25% of companies have a business model that is easily adaptable to rapid change, such as the autonomous trend.

In insurance, this lack of readiness is all the more crucial, considering the digital transformation already underway across the industry. According to PwC, 85% of insurance CEOs are concerned about the speed of technological change. Worries over how to handle legacy systems in the face of digital adoption, as well as the need to accelerate automation and prepare for the next wave of transitions, such as autonomous vehicles, are behind these concerns.

As insurers look toward the complicated future of insuring a society of self-driving automobiles, we believe that focusing on four main areas will prepare them to respond to the autonomous trend with greater speed and agility.

Make better use of data

Consumers are looking for insurers to partner on risk mitigation. To meet these expectations, insurers will need to start making better use of data stores, as well as third-party sources, to help customers identify and reduce threats to life and property. Sixty-four percent want their insurer to provide real-time notifications about roadway safety, while, on the home front, 68% would like to receive mobile alerts on the potential of fire, smoke or carbon monoxide hazards.

“Technology is changing the insurer’s role to one of a partner who can address the customer’s real goals – well beyond traditional insurance,” said Cindy De Armond, managing director, Accenture P&C core platforms lead for North America, in a blog.

De Armond believes that as insurers focus more on the customer’s prevention and recovery needs, they can become the everyday insurer, integrated into the lives of their customers rather than acting only as a crisis partner. This type of partnership makes the insurer-insured relationship more secure and longer-lasting. For insurers and their insureds, the future is likely to be more about predicting and mitigating risk than about handling claims, so improving data capture and analytics capabilities is essential to agile operations that can easily adapt to new trends.

See also: Autonomous Vehicles: ‘The Trolley Problem’

Focus on digital

Consumers want to engage with their insurer in the moment. Whether that means shopping online for coverage while watching a child’s soccer game or making a phone call to ask questions about a policy, they expect to be able to engage on their time and through their channel of choice. Insurers that develop fluid omni-channel engagement now are future-proofing their operations, preparing to survive the evolution to self-driving, when the reams of data gathered from autonomous vehicles can be used to enable on-demand auto coverage.

Vehicle occupants will one day purchase coverage on the fly, depending on the roadway conditions they encounter and whether they are traveling in autonomous mode. Forrester analyst Ellen Carney sees a fluid orchestration of data and digital technologies combining to deliver this type of experience, putting much of the power in the hands of the customer.

“On your way home, you’re going to get a quote for auto insurance,” she says. “And because your driving data could basically now be portable, you could do a reverse auction and say, ‘Okay, insurance companies, how much do you want to bid for my drive home?’” To facilitate the speed and immediacy required for these transactions, insurers will need to digitally quote, bind and issue coverage.
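To make the reverse auction concrete, here is a minimal sketch of how a per-trip auction might pick a winner: insurers submit a price for a single drive, and the lowest eligible bid wins. The `Bid` class, the `run_reverse_auction` function, the insurer names and the prices are all hypothetical and for illustration only; a real marketplace would also need to weigh coverage terms, licensing and binding rules that are left out here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Bid:
    insurer: str    # hypothetical carrier name
    premium: float  # price quoted for this single trip, in dollars


def run_reverse_auction(bids: List[Bid], max_price: Optional[float] = None) -> Optional[Bid]:
    """Return the lowest-priced bid, or None if no bid meets the customer's ceiling."""
    eligible = [b for b in bids if max_price is None or b.premium <= max_price]
    if not eligible:
        return None
    # In a reverse auction the sellers compete, so the lowest price wins.
    return min(eligible, key=lambda b: b.premium)


# Made-up bids for one drive home
bids = [
    Bid("Carrier A", 1.20),
    Bid("Carrier B", 0.95),
    Bid("Carrier C", 1.05),
]

winner = run_reverse_auction(bids)
print(winner)
```

The selection itself is trivial. The hard part for carriers is producing each bid in real time, which is why automated quoting and binding matter so much in this scenario.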

Seek automation

In the U.K., accident liability clearly shifts from the driver to the vehicle for level four and five autonomous automobiles. As driverless vehicles become the norm, the U.S. is likely to adopt similar legislation, requiring a fundamental shift in how risk is assessed and insurance policies are underwritten. Instead of assessing a policy on the driver’s claims history and age, insurers will need to rate risk by variables related to the software that runs the vehicle and how likely owners are to maintain autonomous cars and sensitive self-driving systems. The more complicated underwriting becomes, the more important automation in underwriting will be.

Consumers who can get into a car that drives itself will have little patience for insurers that require extensive manual work to assess their risk and return bound policy documents. Even businesses will come to expect a much faster turnaround on policies related to self-driving vehicles despite the complexity of the various coverages that will be required. In addition, on-demand coverage will require automated underwriting to respond to customer requests.

According to LexisNexis, only 20% of commercial carriers have automated the quoting process, and fewer than half are investing in underwriting automation.

Invest in platform ecosystems

McKinsey defines a platform business model as one that allows multiple participants to “connect, interact and create and exchange value,” while an ecosystem is a set of connected services that fulfill multiple needs of the user in “one integrated experience.” By definition, an insurance platform ecosystem in the age of autonomous vehicles would be a place where consumers and businesses could research and purchase the coverage they need while also picking up related ancillary services, such as apps or entertainment to make the autonomous ride more enjoyable.

Consumers are in search of ecosystem values today. According to Bain’s customer behavior and loyalty study, consumers are willing to pay higher premiums to insurers that offer ancillary services, such as home security monitoring or an automotive services app, and they are even willing to switch insurers to get time-saving benefits like these.

More important to insurers is the ability to partner with other carriers on coverage. Using a commission-based system, insurers offer policies from other carriers to consumers when they don’t have an appetite for the risk or don’t offer the coverage in house. This arrangement allows an insurer to maintain a customer relationship, while providing for their needs and price points.

See also: Autonomous Vehicles: Truly Imminent?

As the autonomous trend reaches fruition, insurers will need to have access to a wide range of coverage types to meet consumer and business needs, and not all carriers will be able or want to create the new products.

Extreme Customer Focus Prepares for the Future

Insurers can prepare for autonomous vehicle adoption by establishing an extreme customer focus, dedicated to establishing enduring loyalty as insurance needs change. Loyal customers spend 67% more over three years than new ones.

As the insurance marketplace opens up to the sale of ancillary services, gaining wallet share from loyal consumers will certainly help to boost revenues as demand for traditional products declines, but to stay competitive, insurers will need a broader mix of coverage types. While current coverages have remained largely unchanged over the decades, the coming years will see an industry in flux as insurers phase out outmoded types of coverage while phasing in new products and services.

In this environment, the platform ecosystems may be the most critical aspect of bridging the gaps. Today, they allow insurers to fulfill the needs of price-sensitive consumers while also meeting the evolving needs of their customers. Tomorrow, platform ecosystems will provide the “flexible business model and diverse product mix” that Deloitte says will be critical to success for insurers in the autonomous age of driving.

Tom Hammond is the chief strategy officer at Confie. He was previously the president of U.S. operations at Bolt Solutions.

A memorandum of understanding (MOU) can be an invaluable, interim tool in mediation in workers' compensation cases.

Teddy Snyder mediates workers' compensation cases throughout California through WCMediator.com. An attorney since 1977, she has concentrated on claim settlement for more than 19 years. Her motto is, "Stop fooling around and just settle the case."

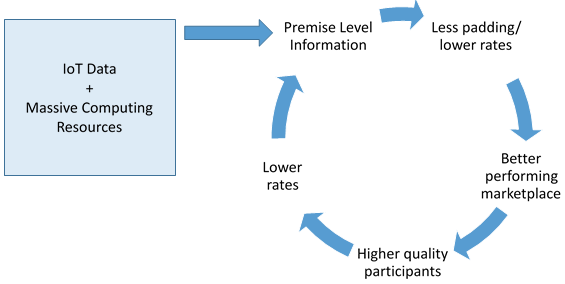

An enhanced view into personalized data may be one of the most interesting opportunities in the insurance market to date.

I recognize I am ignoring huge hurdles for this type of transparency: regulatory constraints, privacy issues, consumer interest, etc., but I do feel strongly that early entrants into these types of products may see very interesting results. Basically, better information becomes the great equalizer…

Conclusion

New, high-resolution data sets along with the computing power needed to make them useful are finally here. While having this added information doesn’t necessarily serve as the silver bullet to perfect rate modeling, it certainly offers insurers an opportunity to refine their analysis and reduce the guesswork. Obviously, the effort to operationalize these new data sets may be significant, and, as noted above, there are certainly consumer and regulatory concerns as this highly personal data is used, but the potential is certainly compelling to consider. At the least, now is the time to start considering where these data sets would be useful as the industry contemplates a move toward highly individualized risk opportunities.

David Wechsler has spent the majority of his career in emerging tech. He recently joined Comcast Xfinity, focused on helping drive the adoption of Internet of Things (IoT), in particular with insurance, energy and smart home/home automation.

A job and career tailored for veterans and their individual skills and abilities allows them every chance for a thriving post-military life.

Sally Spencer-Thomas is a clinical psychologist, inspirational international speaker and impact entrepreneur. Dr. Spencer-Thomas was moved to work in suicide prevention after her younger brother, a Denver entrepreneur, died of suicide after a battle with bipolar condition.

David Maron, M. A., M.H.S. is a biostatistician, public health researcher and consultant with nearly a decade of experience working with electronic medical record system data and leading national data-sharing initiatives to promote mental health in veteran populations.

Jason Field is a U.S. Air Force veteran of seven years. Field is currently pursuing his licensure in professional counseling and has focused his research on married couples and families.

The use of scooters--and number of accidents--is going to increase, creating significant risk. So, what are the insurance implications?

William C. Wilson, Jr., CPCU, ARM, AIM, AAM is the founder of Insurance Commentary.com. He retired in December 2016 from the Independent Insurance Agents & Brokers of America, where he served as associate vice president of education and research.

To get a sense of which companies are innovating well, and which aren't, ITL conducted a major study with our friends at The Institutes, and ITL Chief Innovation Officer Guy Fraker lays out the findings in the second part of his three-part series on innovation. He reports that a growing number of insurers, especially the larger ones, have cleared the first hurdle: They see the need for innovation and have made it a priority. But he also spots problems, based on the survey, based on his extensive consulting work and based on the more than 1,000 hours of interviews that we conducted with executives.

The problems lie on the people side. Too few companies have appointed the sort of small, centralized team that needs to drive innovation. Vanishingly few seem to have figured out how to draw on the resources available to them across the breadth of their operations and may have put themselves in a vicious circle. Companies don't expect their people to be innovative and don't offer rewards for innovation, so people don't offer innovative ideas, which leads their bosses not to trust their ability, which....

As usual, Guy also busts some myths about innovation. Two struck me as especially important:

I encourage you to read the whole piece. I think you'll get a lot out of it.

Have a great week.

Paul Carroll

Editor-in-Chief

Paul Carroll is the editor-in-chief of Insurance Thought Leadership.

He is also co-author of A Brief History of a Perfect Future: Inventing the Future We Can Proudly Leave Our Kids by 2050 and Billion Dollar Lessons: What You Can Learn From the Most Inexcusable Business Failures of the Last 25 Years and the author of a best-seller on IBM, published in 1993.

Carroll spent 17 years at the Wall Street Journal as an editor and reporter; he was nominated twice for the Pulitzer Prize. He later was a finalist for a National Magazine Award.

A major earthquake in the U.S. will destroy billions of dollars in collateral for Fannie Mae and Freddie Mac and leave taxpayers on the hook.

Automated portals have become popular but can be ungainly and unresponsive. A movement back toward agencies has begun.
