In programming circles, there is an aphorism known as the “90-90 rule.” It states that the first 90% of code accounts for the first 90% of the expected development time—and the remaining 10% of code takes another 90% of time. The rule is a tongue-in-cheek acknowledgement that technology projects always take longer than you expect, even when you know that they are going to take longer than you expect.

Sacha Arnoud, director of engineering at Waymo, recently used a variant of the 90-90 rule to characterize Waymo’s self-driving car program. Waymo’s experience, he said, was that the first 90% of the technology took only 10% of the time. Finishing the last 10%, however, is requiring 10x the initial effort.

Arnoud’s remarks were given at a guest lecture at Lex Fridman's MIT class on “Deep Learning for Self-Driving Cars.” He offered technical insights on the history of the Waymo program, how it is applying artificial intelligence and deep learning and how it is moving from demo to industrial-strength product.

The Waymo engineer’s lecture goes beyond most Waymo management presentations and press events. He provides vivid details on the complexity of the effort to date and insight on challenges to come—both for Waymo and for those trying to catch up to its pioneering efforts.

Here are 5 takeaways, though I recommend watching the entire presentation.

1. Industrialization requires 10x the effort.

Arnoud emphasized the large amount of work needed to go from a demo that works in a lab to an industrialized product that is safe to put on the road: “You need to 10x the capabilities of your technology. You need to 10x your team size, including finding effective ways for more engineers and more researchers to collaborate. You need to 10x the capabilities of your sensors. You need to 10x the overall quality of the system, including your testing practices.”

2. Deep learning enabled algorithmic breakthroughs.

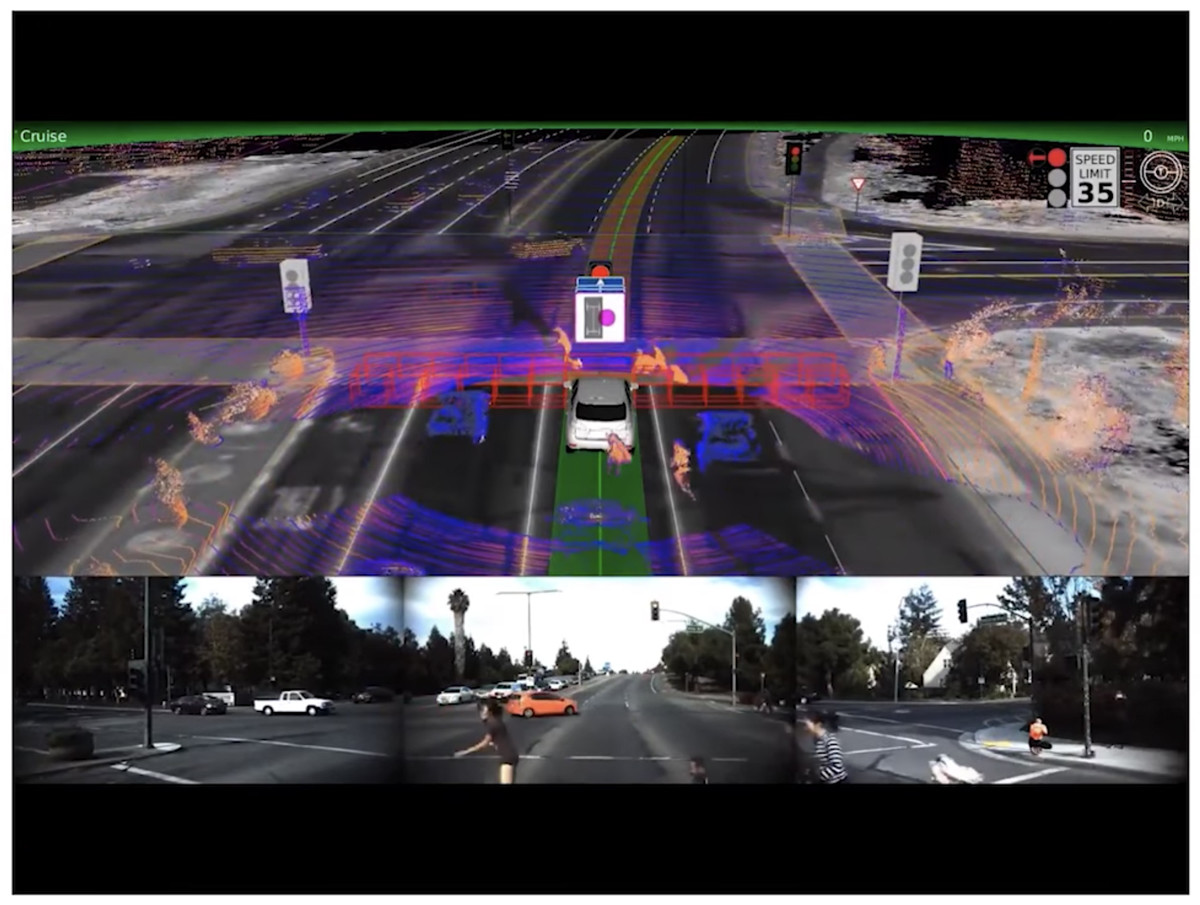

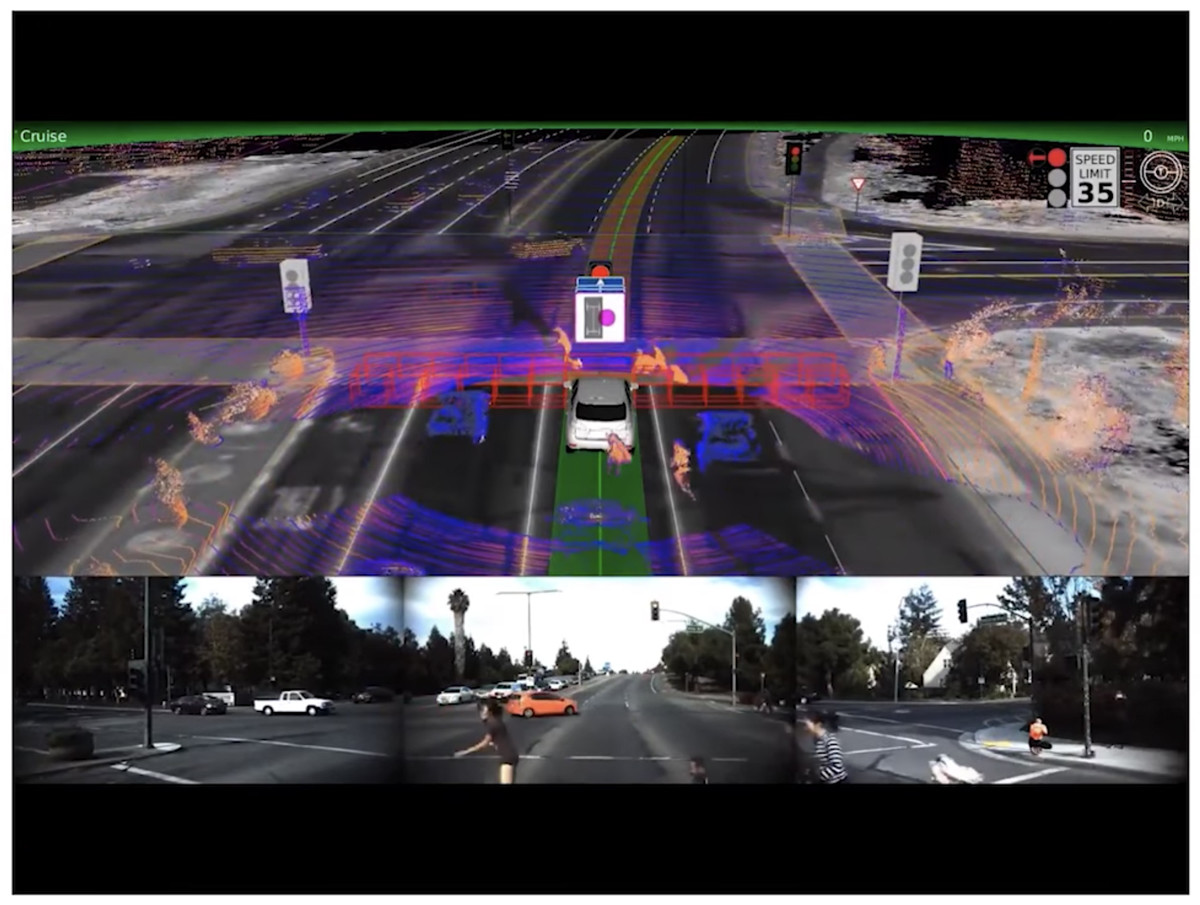

Arnoud noted that deep learning techniques were much less advanced in 2010 when Google started its work on self-driving cars. But, in the years since, deep learning has advanced to enable algorithmic breakthroughs in several critical areas for autonomous driving, including mapping, perception and scene understanding.

Arnoud gave numerous examples, such as using deep learning to analyze street imagery to extract street names, house numbers, traffic lights and traffic signs. The ability to precompute such data and store it as maps in the car saves precious onboard computing power for real-time tasks.

See also: When Will the Driverless Car Arrive?

Deep learning is driving breakthroughs in real-time tasks as well, such as analyzing sensor data to identify traffic signals, other vehicles, obstacles, pedestrians, and so on. Deep learning capabilities also help in anticipating possible behavior of other drivers, cyclists and pedestrians, and driving accordingly.

3. Synergy with other Google units is key to Waymo’s progress.

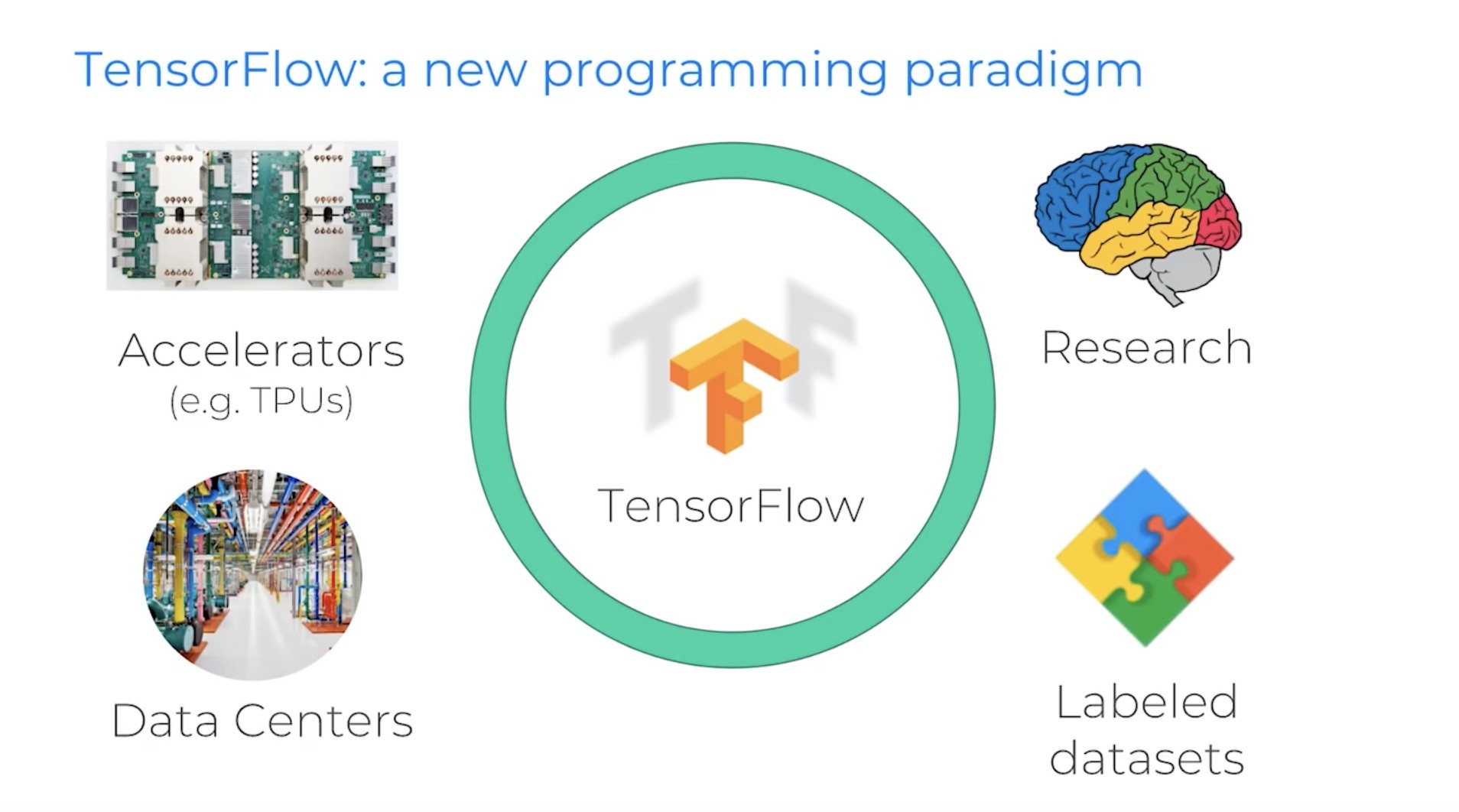

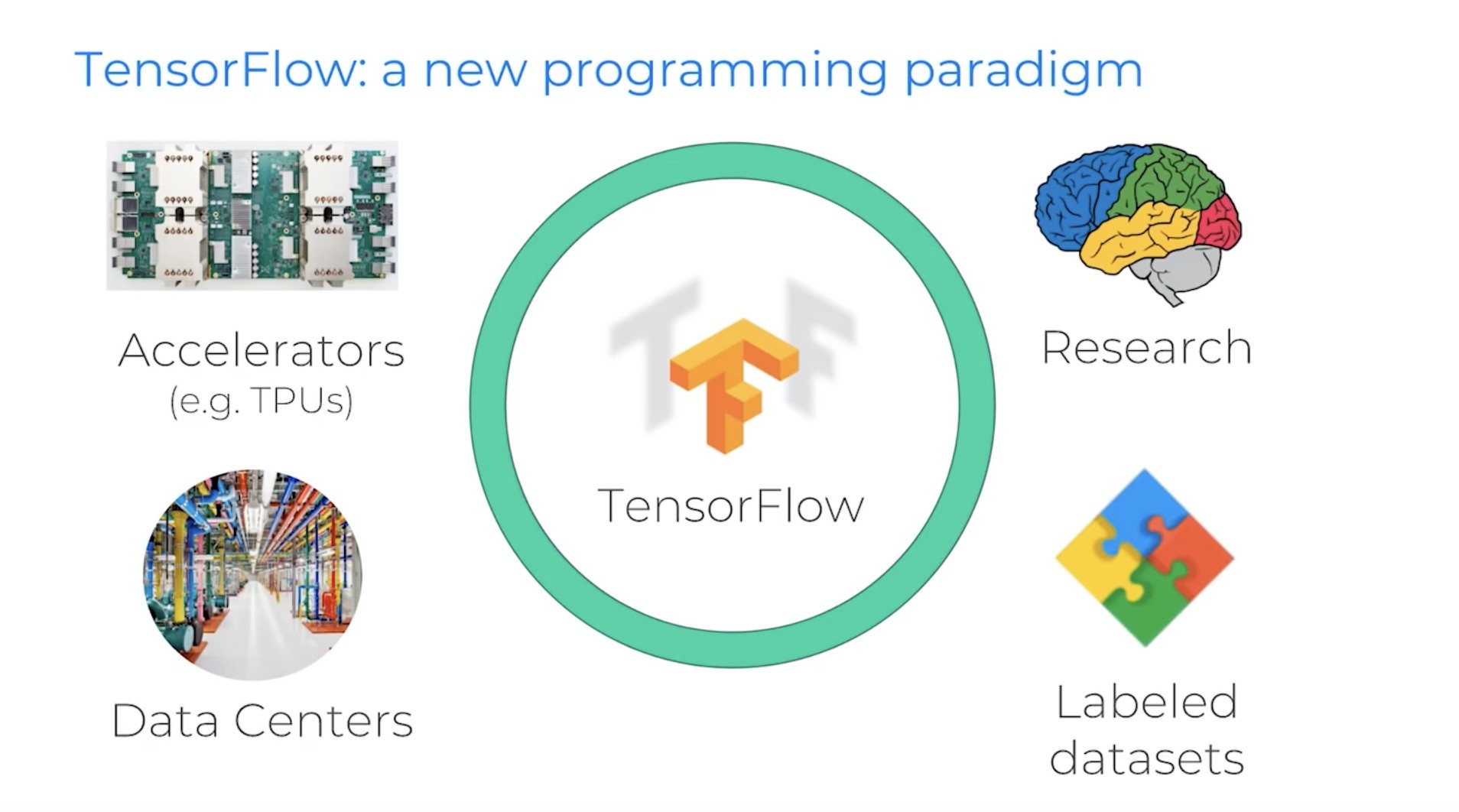

Arnoud acknowledged the importance of Google’s “whole machine learning ecosystem” to Waymo’s progress. This includes the seminal software advances by the Google Brain team and ongoing collaboration with other Google teams working on deep learning at scale, such as in vision, speech, natural language processing and maps. The Google ecosystem also provides specialized infrastructure and tools for machine learning, including accelerators, data centers, labelled datasets and research that support Google’s TensorFlow programming paradigm.

4. Waymo’s testing program might be its secret sauce.

Arnoud emphasized that however great Waymo’s algorithms, sensors and overall package might be, driverless cars are still complex, embedded, real-time robotic systems that must work safely with imperfect data in an unpredictable world. He highlighted Waymo’s three-pronged testing program of real-world driving, simulation and structured testing as key to iterating on and productizing the technology.

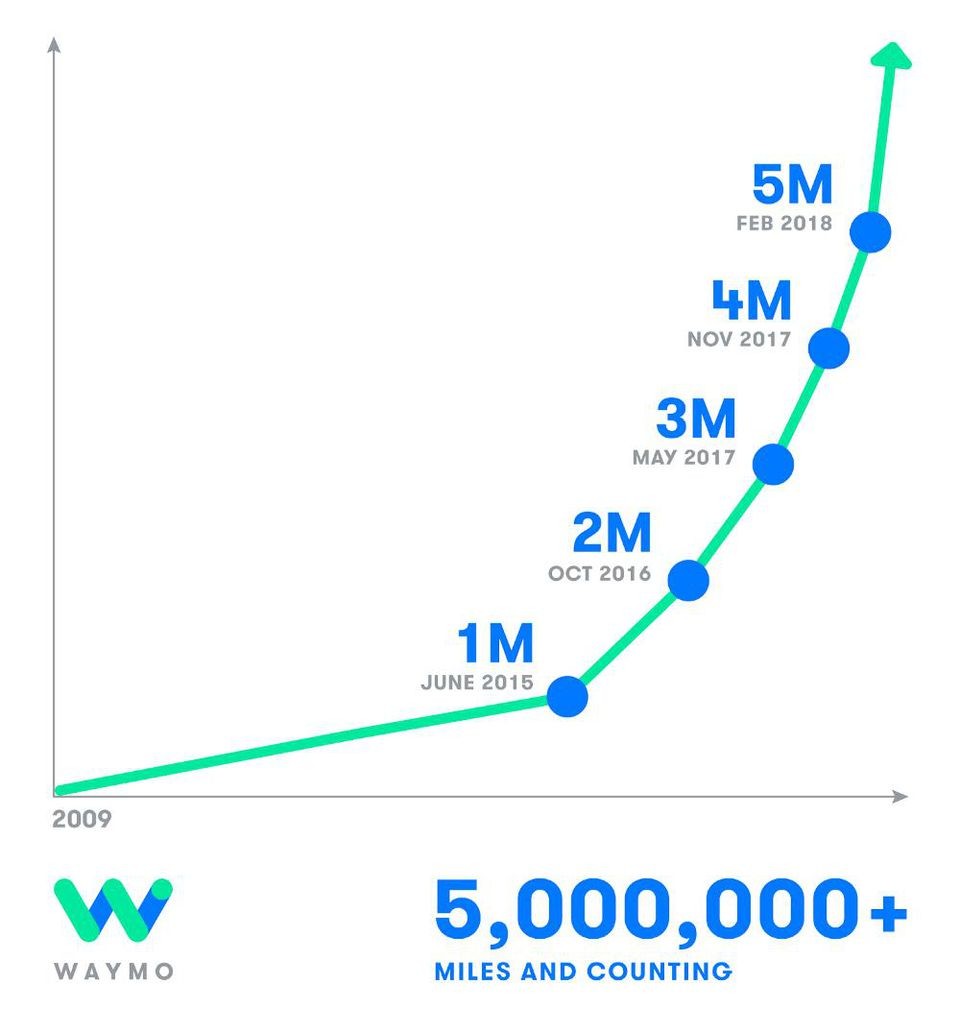

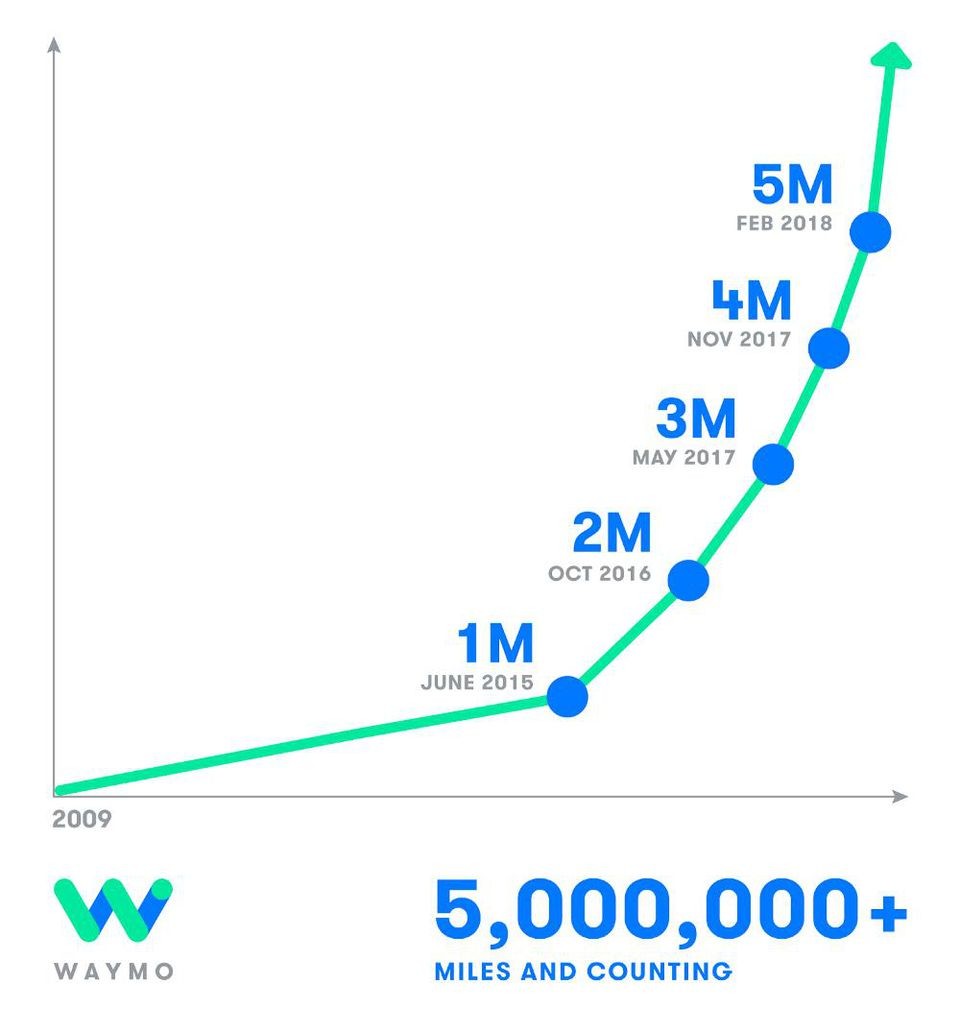

Much is made of the millions of public-road miles that Waymo’s cars have driven autonomously. Arnoud described this as the equivalent of about 300 years of human driving experience and 160 times around the globe. Real-world driving is critical, he said, but what is more important is the ability to simulate.

Simulation is critical because it allows Waymo to test each new iteration of software against all previously driven miles. Even more important is the ability to test against “fuzzed” versions of those millions of miles, such as seeing how the software would handle cars going at slightly different speeds, an extra car, pedestrians crossing in front of the car and so on. Arnoud described Waymo’s simulation-based testing capability as the equivalent of 25,000 virtual cars driving 2.5 billion real and modified miles in 2017.
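To make the idea of “fuzzing” concrete, here is a minimal sketch in Python. It is not Waymo’s simulator or anything shown in the lecture; the `Agent` and `Scenario` types, the 10% speed jitter and the 50% chance of adding an extra car are all assumptions chosen purely for illustration. It takes one logged scene and produces many slightly altered variants of it, which is the general shape of the technique Arnoud described.

```python
import random
from dataclasses import dataclass, replace
from typing import List, Tuple


@dataclass(frozen=True)
class Agent:
    """One road user in a logged scene (hypothetical type for illustration)."""
    kind: str           # e.g. "car" or "pedestrian"
    position_m: float   # distance ahead of the test vehicle, in meters
    speed_mps: float    # speed in meters per second


@dataclass(frozen=True)
class Scenario:
    """A snapshot of a logged drive (hypothetical type for illustration)."""
    agents: Tuple[Agent, ...]


def fuzz(scenario: Scenario, rng: random.Random,
         speed_jitter: float = 0.1, extra_car_prob: float = 0.5) -> Scenario:
    """Return a variant of `scenario` with perturbed speeds and maybe one extra car."""
    # Scale every agent's speed by a random factor within +/- speed_jitter.
    agents = tuple(
        replace(a, speed_mps=a.speed_mps * (1 + rng.uniform(-speed_jitter, speed_jitter)))
        for a in scenario.agents
    )
    # Occasionally drop an additional car into the scene.
    if rng.random() < extra_car_prob:
        agents += (Agent("car", rng.uniform(5.0, 50.0), rng.uniform(0.0, 15.0)),)
    return Scenario(agents)


def fuzzed_variants(scenario: Scenario, n: int, seed: int = 0) -> List[Scenario]:
    """Generate `n` reproducible variants of one logged scenario."""
    rng = random.Random(seed)
    return [fuzz(scenario, rng) for _ in range(n)]


# One logged scene: a car ahead and a pedestrian near the roadway.
logged = Scenario((Agent("car", 20.0, 12.0), Agent("pedestrian", 8.0, 1.4)))
variants = fuzzed_variants(logged, 100)
```

Each variant would then be replayed against the driving software. Seeding the random generator keeps the variants reproducible, so a failure found in one of them can be rerun against the next software iteration.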

The third component of Waymo’s testing program is structured testing. Arnoud said that there is a “long tail” of driving situations that happen very rarely. Rather than trying to encounter every possibility in real-world driving, Waymo set up a 90-acre mock city at the decommissioned Castle Air Force Base where it can test its cars against such edge cases. These tests are then fed into the simulation engine and fuzzed to create variations for more testing.

5. Waymo’s next steps are big (and hard) ones.

Arnoud closed with a discussion of the engineering challenges in front of Waymo. He described two big next steps.

One next step is expanding the “operational design domains” (ODDs) of the cars. This includes expanding into “dense urban cores,” such as San Francisco (in which Waymo recently announced it is expanding its testing program). The other ODD expansion is into additional weather conditions, such as hard rain, snow and fog. (Waymo CEO John Krafcik recently told an audience that he was “jumping up and down” when it snowed 12 inches near Detroit, because it would enable Waymo’s testing in snow.)

See also: 7 Steps for Inventing the Future

The other area of focus was what Arnoud called “semantic understanding.” As an example, he pointed to the chaotic Place de l'Étoile traffic circle around the Arc de Triomphe in Paris. The circle is a meeting point of 12 roads and notoriously difficult to navigate. Arnoud says he has driven it many times without incident, however, and that such situations require a lot more than perception and vehicle operating skills. They require deep understanding of local rules and expectations. They also require constant communication and coordination with other drivers, including signals, gestures and so on. This kind of deep reasoning is key to numerous edge cases and improving the general abilities of driverless cars.

* * *

While Waymo has clearly made tremendous progress towards the driverless future, Arnoud closed his presentation by emphasizing the engineering infrastructure and the complexities of scaling that have to be addressed in order to turn driverless cars into safe production systems.

How far along is Waymo in the last 90% of that industrialization process? Arnoud never said. But, to put a point on the complexities, he showed a closing video of a Waymo car stopped at an intersection as a gaggle of kids bounced on frogger sticks across the street on all sides of the car. Some things are worth waiting for, he seemed to imply.
