To Go Big (Data), Try Starting Small

Despite big opportunities, big data has thus far been more of a big dilemma, especially for healthcare institutions. Here is a new approach.

Get Involved

Our authors are what set Insurance Thought Leadership apart.

|

Partner with us

We’d love to talk to you about how we can improve your marketing ROI.

|

Munzoor Shaikh is a director in West Monroe Partners' healthcare practice, with a primary focus on managed care, health insurance, population health and wellness. Munzoor has more than 15 years of experience in management and technology consulting.

Loss trends, stagnant interest rates, deteriorating reinsurance results and challenging regulatory issues are likely to have a negative impact.

Vince Capaldi is the president of the Bay Oaks Wholesale Brokerage, a national wholesale insurance broker specializing in self-insured workers’ compensation programs. Capaldi has developed and maintained numerous individual and group self-insurance plans in both the public and private sectors nationwide.

More than half of car accidents may now stem from phone-related distracted driving, according to a survey of agents -- a huge increase.

A P3 model (Public Private Partnership) can let governments invest in infrastructure while transferring risk in new ways.

Tariq Taherbhai is senior director at Aon Infrastructure Solutions, Aon’s global risk advisory group for alternative project delivery (APD)/public-private partnerships (PPP).

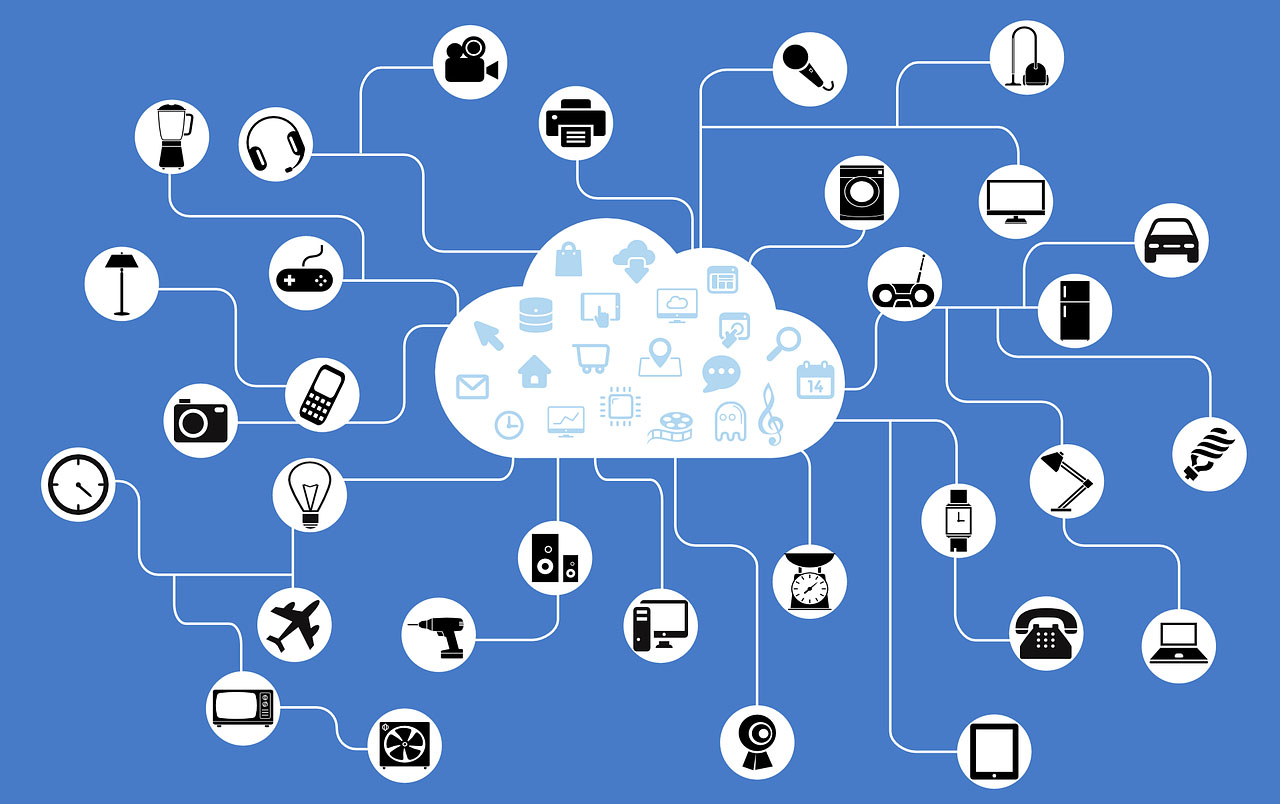

More than 300 insurers surveyed say the IoT's biggest impact will be on "behavior steering" among customers.

See the full infographic here.

Marsha Irving is the head of financial services for FC Business Intelligence. She advises the business on new opportunities and future direction through trend-spotting, in-depth market research and written analysis. Irving works across a variety of industry verticals developing new products, all the way from conception to launch.

I'm cranky on the subject of the smart home because I've been hearing variations on this theme for 25 years without seeing a result.

It's nice to know sharp people -- in this case, Rich Jaroslovsky, a former colleague at the Wall Street Journal who is now a vice president at SmartNews. He just wrote a takedown of the smart home that saved me the trouble.

I had visited the topic in a general way a year ago in an article taking issue with something Google's executive chairman, Eric Schmidt, had said about how the Internet will disappear. My basic complaint about how even really smart people think about automation is that automation is often more trouble than it's worth and that people blithely assume I'd like to automate decisions that, in fact, I don't want automated -- no, I don't want my refrigerator ordering milk for me, my lights always flipping on a certain way when I walk through the door or my TV always turning to ESPN when I wake up.

Recent stories about the glories of the smart home made me think I needed to return to the subject, more specifically this time -- I'm cranky on the subject of the smart home because I've been hearing variations on this theme for 25 years without seeing a result; no, Nest doesn't count. I was prompted into action when I received the following in an email this morning:

"Many large U.S. insurers are bracing for the impact of autonomous driving on their business, but they have yet to grasp that the same trend is at play in the homeowners and renters insurance markets. Insurers that don’t develop a value proposition around the connected home will be forced to give steeper discounts to reflect the lower risks without generating any strategic benefits. Savvy insurers that adapt to the new dynamic have a historic opportunity to become far more relevant than they are today.

"Based on over 100... discussions conducted between November 2015 and February 2016 with smart-home technology vendors; P&C, health, and life insurers; venture capital firms; and technology vendors, this report examines the connected-home use case for the insurance industry, profiles two turnkey smart-home... and mentions 147 other firms." [I deleted three corporate names in there, including the author of the report, because I don't see any need to make this personal, even though you're expected to pay real money for that report.]

Just when I was gearing up to write something on the smart home, though, I saw that Rich had posted his column, which begins:

"With every new smart device I add to my home, it gets a little dumber.

"The thermostats don’t talk to the lights. The security cameras don’t talk to the alarm system, which doesn’t talk to the garage door. The networked speakers talk to each other—but not to the TV sitting a few feet away. Just about every device has its own app for my smartphone, but since none of them work with each other, I’ve got 15 apps controlling 15 functions."

I encourage you to read the whole piece, especially if you harbor hopes that the smart home is a looming opportunity. As Rich notes, you can't have a connected home if the devices don't talk to each other. And while I may have a "standard" for communication, if Rich has a separate standard and so do 87 others of you, then we don't, in fact, have a standard way of communicating.

We'll get to the smart home.

But not soon.

Paul Carroll is the editor-in-chief of Insurance Thought Leadership.

He is also co-author of A Brief History of a Perfect Future: Inventing the Future We Can Proudly Leave Our Kids by 2050 and Billion Dollar Lessons: What You Can Learn From the Most Inexcusable Business Failures of the Last 25 Years and the author of a best-seller on IBM, published in 1993.

Carroll spent 17 years at the Wall Street Journal as an editor and reporter; he was nominated twice for the Pulitzer Prize. He later was a finalist for a National Magazine Award.

The ACA creates a penalty for not purchasing health insurance -- but do the math. It's not really a penalty.

Mutual distributed ledgers, enabled by blockchain, are changing the nature of trust in financial services, with profound implications.

Michael Mainelli co-founded Z/Yen, the City of London’s leading commercial think tank and venture firm, in 1994 to promote societal advance through better finance and technology. Today, Z/Yen boasts a core team of 25 highly respected professionals and is well capitalized because of successful spin-outs and ventures.

Hospital mergers and acquisitions of physician practices keep driving up costs. It's high time we changed the equation.

Tom Emerick is president of Emerick Consulting and cofounder of EdisonHealth and Thera Advisors. Emerick’s years with Wal-Mart Stores, Burger King, British Petroleum and American Fidelity Assurance have provided him with an excellent blend of experience and contacts.

Many claim that the smart home represents a major shift, and opportunity, for insurers, but we're still way too early.
