This is Paper 5 of a series of five on risk appetite and associated questions. The author believes that enterprise risk management (ERM) will remain locked in organizational silos until boards comprehend the links between risk and strategy. That comprehension is achieved either through painful and expensive crises or through the less expensive development of a risk appetite framework (RAF). Understanding of risk appetite is, in our view, very much a work in progress for many organizations, but RAF development and approval can lead boards to demand action from executives.

Paper 1 is the shortest paper and makes a number of general observations based on experience working with a wide variety of companies.

Paper 2 describes the risk landscape, measurable and unmeasurable uncertainties and the evolution of risk management.

Paper 3 answers questions relating to the need for risk appetite frameworks and describes their relationship to strategy.

Paper 4 answers further questions on risk appetite and goes into some detail on the questions of risk culture and risk maturity.

This paper, Paper 5, describes the characteristics of a risk appetite statement and provides a detailed summary of how to operationalize the links between risk and strategy.

What are the characteristics of an effective risk appetite statement?

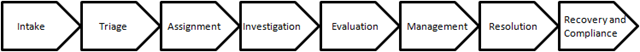

The purpose of a risk appetite statement (RAS) is to provide clear guidance to people, at all levels, on the ranges of risk within which they are required to operate in pursuit of objectives. A RAS exists within a risk appetite framework (RAF). The RAF is the "overall approach including the policies, controls and systems, through which risk appetite is established, communicated and monitored."[1]

As a particular RAS is devolved through an organization, its content will change based on the intended recipients. For example, a RAS at:

- Group executive level will be high level and inclined toward expressing appetite for risks to objectives that deliver value and increase performance. The RAS will describe objectives, risks, expected returns and control requirements,

- Middle management level will articulate levels of tolerance that, if breached, will require escalation and "circuit breaking" reports, with priority given to immediate interdictions and a review of internal controls,

- Business unit level will be more detailed and inclined toward expressing risk limits and internal controls.

A RAS that is not explicit and clearly communicated has limited value. For this reason, a RAS exists within a compendium of (risk appetite) statements that take their root at the intersection between a particular group-level objective and its associated subsidiary objective(s).

The RAF, like the strategic plan, is explicitly approved by the board. Properly crafted and implemented, it has powerful utility to directors in that the RAS approval process requires a series of linear RAF discussions. Wisely conducted, these discussions can result in a peeling back of the many layers of complexity associated with operational drivers and the business model. Independent, non-executive directors (INEDs), in particular, can find this immensely useful, as most INEDs will typically possess only a relatively superficial understanding of the principal operational exigencies that drive performance.

The RAF discussions will include discussions on:

- Explicitly stated objectives[2] and where they reside on the risk appetite continuum,

- The associated subsidiary objectives[3] and where they reside on the risk appetite continuum,

- First RAS drafts at group and subsidiary levels,

- RAS approvals, once operational and business model implications are fully understood and satisfied.

RAF template headings:

RMI frequently uses the following headings when helping organizations develop their RAFs.

1. Mission/purpose/mandate:

a. Large, privately held companies will have clearly established and communicated mission statements, etc.

b. For a large number of regulated entities in Ireland, this will reflect the goal set by the parent for the subsidiary,

c. For public companies, this will be reflected in the legislation establishing the entity,

2. Strategic initiatives:

a. Very many organizations will not have a board-approved, 10-15 year strategic plan. Rather, they will have business plans within which various strategic initiatives are either implied or explicitly stated,

b. The development of a strategic plan is outside of the scope of a RAF, but each document informs the other,

3. Board (risk committee) statement of risk assurance requirements: This is a prescriptive statement addressing a wide range of requirements and would include the following, among others:

a. Objectives that are clearly articulated, aligned with strategy and performing to expectations,

b. Risks to objectives that are identified, assessed and evaluated against approved risk criteria,

c. Risk treatment plans that are executed efficiently and effectively, increasing the likelihood of achieving objectives,

4. Objectives: As discussed above,

5. Risk appetite continuum: A five-level continuum against which company (group and subsidiary) objectives are mapped relative to appetites for risk (from very high to very low),

6. Risk appetite statements:

a. Overall group RAS

b. Objectives-level RASs

c. Risk treatment-level RASs[4]

7. Risk criteria tables (risk tolerances and limits)

a. Five levels (substantial, down to negligible impacts),

b. Measurable risk limits[5]

c. Measurable risk tolerances.
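Headings 5 and 7 of the template can be illustrated with a small data structure. The following is a minimal sketch only, not a prescribed RMI format. It assumes, purely for illustration, that a risk limit is the tighter operating threshold set at business unit level and that a risk tolerance is the wider threshold whose breach triggers escalation. The intermediate level names on the continuum, the class and field names, and the example metric and figures are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Appetite(IntEnum):
    """Five-level risk appetite continuum, from very low to very high.
    The three intermediate level names are assumed for illustration."""
    VERY_LOW = 1
    LOW = 2
    MODERATE = 3
    HIGH = 4
    VERY_HIGH = 5


@dataclass(frozen=True)
class RiskCriterion:
    """One row of a hypothetical risk criteria table."""
    objective: str      # the objective the criterion protects
    appetite: Appetite  # where the objective sits on the continuum
    metric: str         # what is measured
    limit: float        # assumed: tighter, business-unit threshold
    tolerance: float    # assumed: wider threshold, breach means escalation

    def __post_init__(self):
        # Under the stated assumption, a limit never exceeds its tolerance.
        if self.limit > self.tolerance:
            raise ValueError("limit must not exceed tolerance")

    def assess(self, observed: float) -> str:
        """Classify an observed metric value against limit and tolerance."""
        if observed > self.tolerance:
            return "escalate"
        if observed > self.limit:
            return "review"
        return "within limit"


# Hypothetical example: an objective with a low appetite for downtime.
criterion = RiskCriterion(
    objective="Maintain service availability",
    appetite=Appetite.LOW,
    metric="unplanned downtime hours per month",
    limit=4.0,
    tolerance=8.0,
)

for hours in (3.0, 6.0, 9.5):
    print(hours, criterion.assess(hours))
```

Making the thresholds numeric is what makes a statement testable: any observed value can be placed against the criterion without interpretation.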

How can organizations ensure that RAFs are both actionable and measurable?

The RAF is to the board of directors what risk management is to the rest of the organization. As such, there is a direct correlation between the efficacy of the RAF and the efficacy of the risk management framework. Ensuring that RAFs are both actionable and measurable requires an understanding of how boards work in this particular context.

When RMI converses with board members and the executive, we share what we call the RMI "Tell me, Show me, Prove it to me" questions.

The questions vary from company to company, but the broad results, in terms of the informal scores that we then apply, do not vary greatly.

For example:

- Tell me: (Score: 3/10)

- How you relate your strategic plan to critical objectives and their associated key performance indicators (KPIs),

- About your board audit/risk charter,

- About your risk management framework.

We are told about external attestation (sometimes exemplary), policies, board committees and rich processes.

- Show me: (Score: 5/10)

- Your strategic plan/objectives statements,

- Your risk register and how it links to objectives, KPIs and threats/risks to the enterprise,

- Your risk appetite statements,

- Your risk treatment plans,

- Your top five contingency plans.

We find that these documents do not always exist and that the Excel spreadsheets, Word documents and PowerPoint presentations (invariably with differing formats for different parts of the organization) make no consistent reference to objectives, other than obliquely. In addition, we find that original risk reports are edited on multiple occasions as they travel from original risk owners to the executive and the board.

- Prove to me that: (Score: 2/10)

- Your risk register is not just a list of risks,

- Top 10 risks are the real top 10,

- Risk owners actually provide input to the flow of information and ultimately to the risk register,

- Known issues and risks on the ground can be escalated to decision makers, without jeopardy to the originators of information,

- Dynamic risks can be aggregated in real time and with confidence because of your data governance practices,

- Your crisis management team (CMT)[6] is developed and capable.

We find that risk data governance is so poor that answers to these questions can only be determined after manual searches over a number of days. This is compounded when, invariably, we also find that managers have not been adequately trained in the use of common language, risk management processes or board risk-assurance requirements. Furthermore, we find that "risk culture" is such that people are disinclined to speak up with regard to matters giving them cause for concern lest they jeopardize relationships with colleagues and their next reports.
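Several of the "Prove it to me" questions can be checked mechanically once the register is held as structured data rather than in scattered documents. The following is a minimal sketch under stated assumptions: the field names are hypothetical, and ranking by impact multiplied by likelihood on five-point scales is a common simplification assumed here for illustration, not a method described in this paper.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RegisterEntry:
    """One hypothetical risk register entry."""
    risk: str
    objective: Optional[str]  # objective the risk threatens, if recorded
    owner: Optional[str]      # named risk owner, if recorded
    impact: int               # 1 (negligible) to 5 (substantial)
    likelihood: int           # 1 to 5, assumed scale

    @property
    def score(self) -> int:
        # Assumed scoring rule: impact multiplied by likelihood.
        return self.impact * self.likelihood


def governance_gaps(register: List[RegisterEntry]) -> List[RegisterEntry]:
    """Entries with no linked objective or no named owner."""
    return [e for e in register if not e.objective or not e.owner]


def top_risks(register: List[RegisterEntry], n: int = 5) -> List[RegisterEntry]:
    """Highest-scoring entries among those linked to an objective and an owner."""
    eligible = [e for e in register if e.objective and e.owner]
    return sorted(eligible, key=lambda e: e.score, reverse=True)[:n]


# Hypothetical register contents.
register = [
    RegisterEntry("Key supplier failure", "Protect operations", "COO", 4, 3),
    RegisterEntry("Data breach", "Protect reputation", "CIO", 5, 3),
    RegisterEntry("Regulatory change", None, "CRO", 3, 4),
]

print([e.risk for e in top_risks(register, n=2)])
print([e.risk for e in governance_gaps(register)])
```

An entry that cannot be tied to an objective and an owner is reported as a gap rather than ranked, which separates a register from a simple list of risks.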

We therefore recommend that fundamental questions for the CEO and INEDs should include:

- What demonstrable evidence do you have that your top five group risks are the right top five?

- Can you monitor threats and risks to objectives in real time, and what kind of dynamic tests can you run on your red flags?

- What proof do you have that management is capable of switching from business as usual to delivery of credible solutions to stakeholders under abnormal/adverse conditions?

- Where are you in terms of risk maturity, and how do you know?

RMI also recommends the following framework, which summarizes how to "Operationalize the links between Risk and Strategy," ensuring that RAFs are measurable and actionable.

The framework is summarized as follows:

1. Reporting to the CEO:

Strategy/Risk Program Office reporting to the CEO and Board Audit/Risk Committee, with:

- Focus 1: Defend operations, reputation, business model,

- Focus 2: Exploit opportunities faster than less adaptive competitors.

2. Board Audit/Risk Committee:

Executing responsibilities with regard to risk in the manner described earlier in this paper and in particular as described in the RMI answer to the FAQ: "What are the characteristics of an effective risk appetite statement?"

3. Data Governance: Putting System to Process:

Understanding the significance of integrating:

- Executive and management (risk) training;

- Inclusion of risk management KPIs in annual appraisals, and

- Deployment of a database solution designed and specified to the ISO 31000 series.

(Note: Lessons learned from the global financial crisis include that database solutions, by themselves, are not the solution. The adage, "poor information input, misinformation output," is appropriate and reminds us that tools and techniques in the wrong hands can precipitate disaster.)

4. Library of Responses to Top 5-10 Threat/Opportunity Rehearsals

Seminal works that have been undertaken include:

- 1996: The Impact of Catastrophes on Shareholder Value: Rory F. Knight & Deborah J. Pretty, The Oxford Executive Research Briefings, Templeton College, University of Oxford, Oxford OX1 5NY, England[7].

What contributed to catastrophic failure?

- Poor crisis management,

- Failure to recognize the significance of the event early enough in the crisis,

- Poor stakeholder communications, including with news and social media,

- Lack of awareness of the potential for reputational damage,

- Failure to appreciate the importance of transparency early enough,

- Failure to learn from prior experience (even with the same company).

Resilient Companies:

- Have exceptional risk radar,

- Build effective internal and external networks,

- Review and adapt based on excellent communications,

- Have the ability to respond rapidly and flexibly,

- Have diversified resources.

These separate and unrelated studies similarly conclude that management's capability to defend operations, the business model and reputation is mission-critical to sustainable performance in the 21st century.

In conclusion, it is our view that operationalizing the links between risk and strategy in the manner outlined above will, with positive CEO and board endorsement, fulfill the role of the board as concluded by the Financial Reporting Council (FRC) report: Boards and Risk: A Summary of Discussions with Companies, Investors and Advisors, September 2011.

References

[1] http://www.financialstabilityboard.org/publications/r_131118.htm

[2] Strategic plans and business plans without explicitly stated objectives have no meaning.

[3] Theoretically, objectives are devolved from group to subsidiary boards. In reality, what happens is that group and subsidiary executives and directors (the latter through respective risk committees) engage in operational discussions directed at ensuring understanding, thus increasing likelihood of success.

[4] Properly constructed risk treatments are the leading indicators of the future state of health of objectives. As such, risk treatments are at the cutting edge of the management of risks to objectives.

[5] Dr. Peter Drucker: "If it can't be measured, it can't be managed." As with determination of leading indicators in balanced scorecards, these can often be difficult to establish.

[6] CMTs are activated when issues and events that threaten to overpower operations, the business model or reputation arise.